| Hao-Yu Wu¹ | Michael Rubinstein¹ | Eugene Shih² | John Guttag¹ | Frédo Durand¹ | William T. Freeman¹ |

| ¹MIT CSAIL | ²Quanta Research Cambridge, Inc. |

|

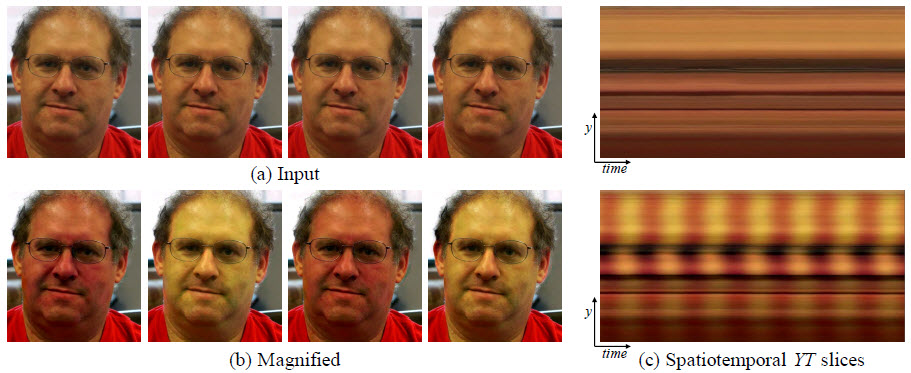

An example of using our Eulerian Video Magnification framework for visualizing the human pulse. (a) Four frames from the original video sequence. (b) The same four frames with the subject's pulse signal amplified. (c) A vertical scan line from the input (top) and output (bottom) videos plotted over time shows how our method amplifies the periodic color variation. In the input sequence the signal is imperceptible, but in the magnified sequence the variation is clear. |

Abstract

Our goal is to reveal temporal variations in videos that are difficult or impossible to see with the naked eye and display them in an indicative manner. Our method, which we call Eulerian Video Magnification, takes a standard video sequence as input, and applies spatial decomposition, followed by temporal filtering to the frames. The resulting signal is then amplified to reveal hidden information. Using our method, we are able to visualize the flow of blood as it fills the face and also to amplify and reveal small motions. Our technique can run in real time to show phenomena occurring at temporal frequencies selected by the user.
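The three stages named in the abstract (spatial decomposition, temporal filtering, amplification) can be illustrated with a toy example. The following is only a minimal sketch under stated assumptions, not the released MATLAB code: box averaging stands in for the spatial decomposition, the temporal filter is a difference of two first-order IIR low-pass filters, frames are plain nested lists of grayscale values, and the function names `box_pool`, `temporal_bandpass`, and `magnify` are hypothetical.

```python
import math

def box_pool(frame, k):
    """Average non-overlapping k x k blocks of a 2-D list (a crude spatial low-pass)."""
    h, w = len(frame), len(frame[0])
    return [
        [
            sum(frame[y + dy][x + dx] for dy in range(k) for dx in range(k)) / (k * k)
            for x in range(0, w - k + 1, k)
        ]
        for y in range(0, h - k + 1, k)
    ]

def temporal_bandpass(series, fps, f_lo, f_hi):
    """Band-pass one pixel's time series as the difference of two first-order IIR low-passes."""
    def smoothing(cutoff_hz):
        # Smoothing factor of an exponential low-pass with the given cutoff (assumed form).
        return 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / fps)

    a_lo, a_hi = smoothing(f_lo), smoothing(f_hi)
    slow = fast = series[0]  # start both filters at the first sample to avoid a DC jump
    band = []
    for value in series:
        slow += a_lo * (value - slow)  # keeps only content below f_lo
        fast += a_hi * (value - fast)  # keeps content below f_hi
        band.append(fast - slow)       # what remains lies roughly between f_lo and f_hi
    return band

def magnify(frames, fps, f_lo, f_hi, gain, k=2):
    """Pool each frame, band-pass every pooled pixel over time, add the amplified band back."""
    pooled = [box_pool(frame, k) for frame in frames]
    rows, cols = len(pooled[0]), len(pooled[0][0])
    out = [[row[:] for row in frame] for frame in pooled]
    for y in range(rows):
        for x in range(cols):
            series = [frame[y][x] for frame in pooled]
            band = temporal_bandpass(series, fps, f_lo, f_hi)
            for t, b in enumerate(band):
                out[t][y][x] += gain * b
    return out

# Synthetic 4x4 "video": a 1 Hz brightness ripple of amplitude 0.5 on a level of 100.
fps = 30
frames = [
    [[100.0 + 0.5 * math.sin(2 * math.pi * t / fps)] * 4 for _ in range(4)]
    for t in range(150)
]
magnified = magnify(frames, fps, f_lo=0.5, f_hi=3.0, gain=20.0)
```

In this sketch the output is returned at the pooled resolution for brevity; a fuller version would upsample the amplified band and add it to the full-resolution frames.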

@article{Wu12Eulerian,
  author = {Hao-Yu Wu and Michael Rubinstein and Eugene Shih and John Guttag and Fr\'{e}do Durand and William T. Freeman},
  title = {Eulerian Video Magnification for Revealing Subtle Changes in the World},
  journal = {ACM Transactions on Graphics (Proc. SIGGRAPH 2012)},
  year = {2012},
  volume = {31},
  number = {4},
}

Supplemental: PDF (the derivation in Appendix A in the paper given in more detail)

SIGGRAPH 2012 Presentation: PPT with videos (150MB)

Visualization of Eulerian motion magnification (Courtesy of Lili Sun)

Videoscope by Quanta Research - upload your videos and have them magnified!

Michael's PhD thesis: Analysis and Visualization of Temporal Variations in Video

SIGGRAPH 2012 Supplemental Video:

Download: mov (220 MB)

Our NSF SciVis 2012 video "Revealing Invisible Changes In The World":

NSF International Science & Engineering Visualization Challenge 2012

Science Vol. 339 No. 6119 pp. 518-519, February 1 2013

Download: mov (230 MB)

Related Publications

Analysis and Visualization of Temporal Variations in Video, Michael Rubinstein, PhD Thesis, MIT Feb 2014

Riesz Pyramids for Fast Phase-Based Video Magnification, ICCP 2014, to appear

Phase-Based Video Motion Processing, SIGGRAPH 2013

Press

| Outlet | Article | Related links |
| --- | --- | --- |
| Yedioth Ahronoth (Hebrew) | The Hidden Secrets of Video | |
| Discovery Channel | Daily Planet | |
| Daily Mail | How to spot a liar (and cheat at poker) | |
| NY Times | Scientists Uncover Invisible Motion in Video | Video |
| Txchnologist | New Video Process Reveals Heart Rate, Invisible Movement | |
| MIT News | MIT researchers honored for 'Revealing Invisible Changes' | |
| Wired UK | MIT algorithm measures your pulse by looking at your face | |
| Technology Review | Software Detects Motion that the Human Eye Can't See | |
| BBC Radio | MIT Video colour amplification | |
| Der Spiegel (German) | Video software can make pulse visible | |
| MIT News | Researchers amplify variations in video, making the invisible visible | Video, Spotlight |
| Imaging Resource | Is baby still breathing? Find out… from a video! | |
| PetaPixel | Magnifying the Subtle Changes in Video to Reveal the Invisible | |
| Huffington Post | MIT's New Video Technology Could Give You Superhuman Sight | |
| Gizmodo | New X-Ray Vision-Style Video Can Show a Pulse Beating Through Skin | |

Code and Binaries

- Matlab source code (v1.1, 2013-03-02)

Reproduces all the results in the paper. See the README file for details.

- Executables for 64-bit Windows, 64-bit Linux and 64-bit Mac (v1.1, 2013-09-05)

This is a compiled version of the MATLAB code that can be run from the command line. It requires neither programming nor a MATLAB installation. Instead, these binaries use the MATLAB Compiler Runtime (MCR), which is free and takes only a couple of minutes to install. See the README file for details.

The code and executables are provided for non-commercial research purposes only. By downloading and using the code, you are consenting to be bound by all terms of this software release agreement. Contact the authors if you wish to use the code commercially.

* This work is patent pending

Please cite our paper if you use any part of the code or videos supplied on this web page.

Tips for recording and processing videos:

At capture time:

- Minimize extraneous motion. Put the camera on a tripod. If appropriate, provide support for your subject (e.g. hand on a table, stable chair).

- Minimize image noise. Use a camera with a good sensor, and make sure there is enough light.

- Record in the highest spatial resolution possible and have the subject occupy most of the frame. The more pixels that cover the object of interest, the better the signal you will be able to extract.

- If possible, record and store your video uncompressed. Codecs that compress frames independently (e.g. Motion JPEG) are usually preferable to codecs that exploit inter-frame redundancy (e.g. H.264), which under some settings can introduce compression-related temporal signals into the video.
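The resolution tip above can be motivated with a simple noise model. As a rough sketch, assume each of the $N$ pixels covering the object observes the same underlying signal $s(t)$ plus independent zero-mean noise $n_i(t)$ of variance $\sigma^2$ (an idealization; real sensor noise and compression artifacts are often spatially correlated). Averaging those pixels gives

$$
\bar{I}(t) = \frac{1}{N}\sum_{i=1}^{N}\bigl(s(t) + n_i(t)\bigr) = s(t) + \frac{1}{N}\sum_{i=1}^{N} n_i(t),
\qquad
\operatorname{Var}\bigl[\bar{I}(t)\bigr] = \frac{\sigma^2}{N}.
$$

Under these assumptions the noise standard deviation falls as $1/\sqrt{N}$, so quadrupling the number of pixels on the subject roughly halves the noise in the pooled signal.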

When processing:

- To amplify motion, we recommend our new phase-based pipeline.

- To amplify color, use the linear pipeline (the paper and code on this page).

- Choose the time scale that you want to amplify. For example, adult heartbeats tend to occur around once per second, corresponding to 1 Hz, so you can amplify content between 0.5 Hz and 3 Hz to be safe. The narrower the interval, the more focused the amplification is and the less noise gets amplified, but at the risk of missing physical phenomena that fall outside it.

- Don't forget to account for the video frame rate when specifying the temporal passband! See our code for examples.
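To illustrate why the frame rate matters when specifying the passband, here is a minimal sketch that converts a passband given in Hz into the two quantities a temporal filter typically needs: cutoffs normalized by the Nyquist frequency, and the DFT bins covered by an ideal band-pass. The function names `normalized_cutoffs` and `passband_bins` are hypothetical and are not taken from the released code.

```python
def normalized_cutoffs(f_lo, f_hi, fps):
    """Express a passband in Hz as fractions of the Nyquist frequency (fps / 2)."""
    nyquist = fps / 2.0
    if not 0 <= f_lo < f_hi <= nyquist:
        raise ValueError("passband must satisfy 0 <= f_lo < f_hi <= fps / 2")
    return f_lo / nyquist, f_hi / nyquist

def passband_bins(f_lo, f_hi, fps, n_frames):
    """List the DFT bins k whose frequency k * fps / n_frames lies within [f_lo, f_hi]."""
    normalized_cutoffs(f_lo, f_hi, fps)  # reuse the range check
    return [
        k for k in range(n_frames // 2 + 1)
        if f_lo <= k * fps / n_frames <= f_hi
    ]

# The same 0.5-3 Hz pulse band on a 300-frame clip selects different bins
# depending on the frame rate the clip was recorded at.
bins_30fps = passband_bins(0.5, 3.0, fps=30, n_frames=300)
bins_60fps = passband_bins(0.5, 3.0, fps=60, n_frames=300)
```

With 300 frames, each bin is 0.1 Hz wide at 30 fps but 0.2 Hz wide at 60 fps, so a filter specified in bins or normalized units without regard to the frame rate would amplify a different physical frequency range.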

Data

All videos are in MPEG-4 format and encoded using H.264.


Acknowledgements

We would like to thank Guha Balakrishnan, Steve Lewin-Berlin and Neal Wadhwa for their helpful feedback, and the SIGGRAPH reviewers for their comments. We thank Ce Liu and Deqing Sun for helpful discussions on the Eulerian vs. Lagrangian analysis. We also thank Dr. Donna Brezinski, Dr. Karen McAlmon, and the Winchester Hospital staff for helping us collect videos of newborn babies. This work was partially supported by the DARPA SCENICC program, NSF CGV-1111415, and Quanta Computer. Michael Rubinstein was partially supported by an NVIDIA Graduate Fellowship.

Last updated: Feb 2014