Videos

I work in the Humanoid Robotics Group at MIT CSAIL with Prof. Rodney Brooks, along with many other great people. This page contains a few video demonstrations of bits and pieces from my own work and collaborations (for background information and more detail, please look here).

| Video: Shadow detection |

|

Coco tracks the shadow of its arm, which allows it to estimate the

time to contact with a surface, even without much texture (where stereo

might fail).

AVI -- (7.4 MB) Length: approximately 20 seconds |

|

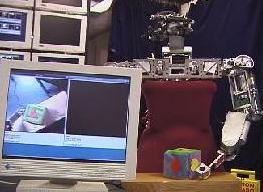

| Video: Object localization |

|

Babybot learns the appearance of objects handed to it, and can then find them

again and pick them up. I was responsible for the object localization

component.

AVI -- (33 MB) Length: a few minutes

Robot's view MOV -- (4 MB) |

|

| Video: Object naming |

|

In this video, Cog is given names for the objects in its workspace

(a car and a ball). Then, when these names are spoken, the robot

turns back to look at the appropriate object, even though it

has left its field of view. See the next few videos for

how Cog learns to recognize objects in the first place.

Quicktime -- (7.4 MB) Length: approximately 30 seconds |

|

| Video: Active segmentation |

|

Cog taps a toy with its flipper and uses the motion to segment

the object from the background.

Quicktime -- (2.0 MB) Length: approximately 20 seconds |

|

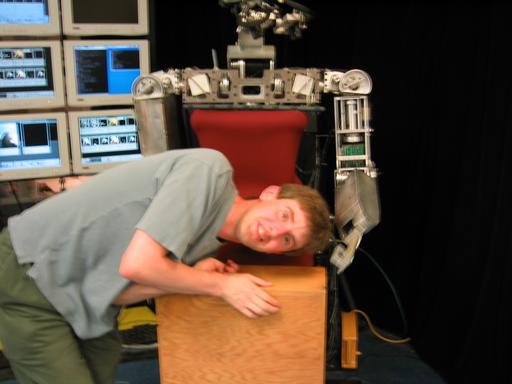

| Video: Head segmentation (the hard way) |

|

Cog uses active segmentation to separate my head out from the

background. The hair and face are correctly grouped.

This movie is an homage to Matt Williamson's

safety demo.

It shows Cog's perspective while batting my head around.

MPEG1 -- (90 kB) Length: approximately 10 seconds |

|

| Video: Open object recognition |

|

In this video, Cog is confronted with a new object (a red ball).

Since it has limited experience at this point, it confuses the ball

with another object (a cube) which has similar color.

Once Cog pokes the object, after about 5 seconds of processing it can

correctly distinguish between these objects based on shape.

Quicktime -- (6.7 MB) Length: approximately 1 minute |

|

| Video: Role transfer |

|

Kismet watches a human sorting green objects to one

side and yellow objects to the other. When prompted, the robot says the

direction it expects a new object to go.

Quicktime -- (1.5 MB) Length: approximately 1.5 minutes |

|

| Video: Learning through activity |

|

In this video, the operator tells Cog they are going to "find" a "toma".

Then they look at several objects in turn, saying "no" to each.

Finally the operator shows Cog a bottle and says "yes".

Cog then associates the name "toma" with the bottle, by

analogy with previous search episodes.

Quicktime -- (23.5 MB) Length: 1.5 minutes |

|

| Video: Platform Shoe |

|

Using a forward-mounted camera on a shoe, a wearable system

can view the wearer's local environment. When the shoe

is pressed against the ground during walking, the image

is stable and in a simple ground-aligned orientation.

This movie shows the camera's perspective, and some

simple estimation of the phase of walking from visual

information. It is sped up by a factor of about two

relative to real-time.

MPEG1 -- (18.4 MB) Length: 1.5 minutes |

|

| Video: Opening a door |

|

In this video, a robot (Cardea, with a single arm at this point)

locates a door, pushes it open, and goes through -- all autonomously.

I did the computer vision for this project.

AVI -- (29 MB) Length: 1.5 minutes |

|

| Video: Conversational turn taking |

|

Speech recognition, speech generation, and eye contact combine to

allow Kismet to engage two people in proto-conversation (where

the structure of a conversation is present, but the words are

just infant-like babble).

Quicktime -- (2.1 MB) Length: approximately 50 seconds |

|