Andrew Rouditchenko

email: roudi AT mit.edu

Google Scholar | CV available upon request |

I am a PhD student at MIT CSAIL in the Spoken Language Systems Group, advised by Dr. Jim Glass.

I graduated from MIT in 2021 with my M.Eng. in EECS, advised by Dr. Jim Glass and Professor David Harwath.

I graduated from MIT in 2019 with my S.B. in EECS, and I worked with Professor Antonio Torralba and Professor Josh McDermott.

I am part of the Sight and Sound MIT-IBM Watson AI Lab project through which I've worked with several researchers on multi-modal learning.

I was an intern on the FAIR Seamless team at Meta during summer 2024.

I was fortunate to work with Dr. Tatiana Likhomanenko at Apple's Machine Learning Research Group during summer 2023.

First-Author Publications |


|

mWhisper-Flamingo for Multilingual Audio-Visual Noise-Robust Speech Recognition Andrew Rouditchenko, Samuel Thomas, Hilde Kuehne, Rogerio Feris, James Glass IEEE Signal Processing Letters 2025 Code, models, and Colab Demos Video Presentation Paper |

|

|

Whisper-Flamingo: Integrating Visual Features into Whisper for Audio-Visual Speech Recognition and Translation Andrew Rouditchenko, Yuan Gong, Samuel Thomas, Leonid Karlinsky, Hilde Kuehne, Rogerio Feris, James Glass Interspeech 2024 Code, models, and Colab Demos Video Presentation Paper |

|

|

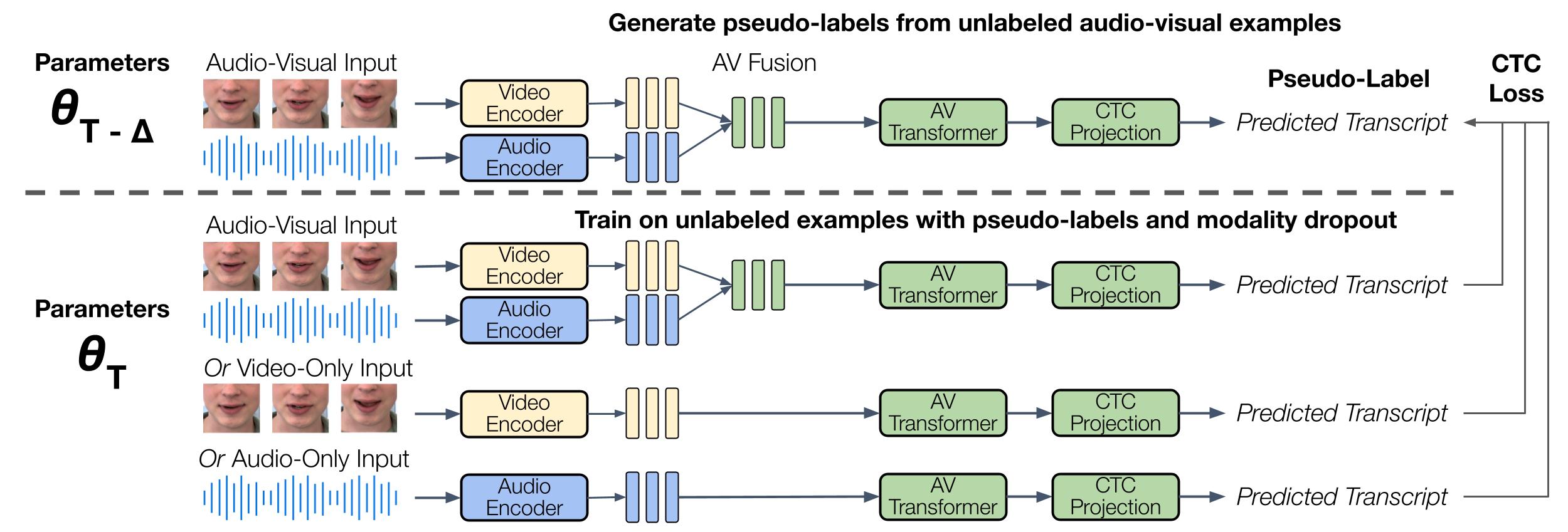

AV-CPL: Continuous Pseudo-Labeling for Audio-Visual Speech Recognition Andrew Rouditchenko, Ronan Collobert, Tatiana Likhomanenko Preprint 2023 Paper |

|

|

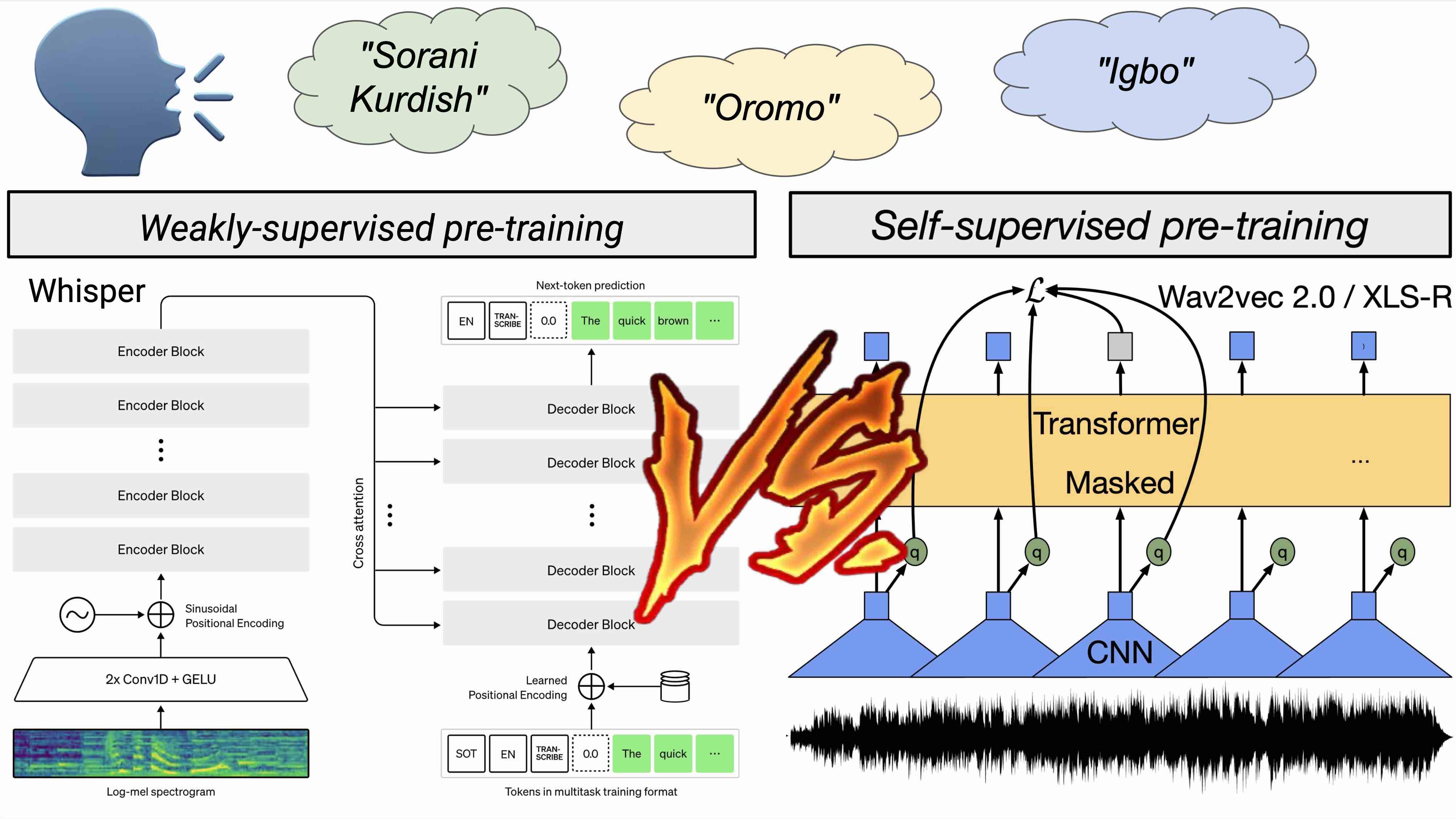

Comparison of Multilingual Self-Supervised and Weakly-Supervised Speech Pre-Training for Adaptation to Unseen Languages Andrew Rouditchenko, Sameer Khurana, Samuel Thomas, Rogerio Feris, Leonid Karlinsky, Hilde Kuehne, David Harwath, Brian Kingsbury, James Glass Interspeech 2023 Video Presentation Paper |

|

|

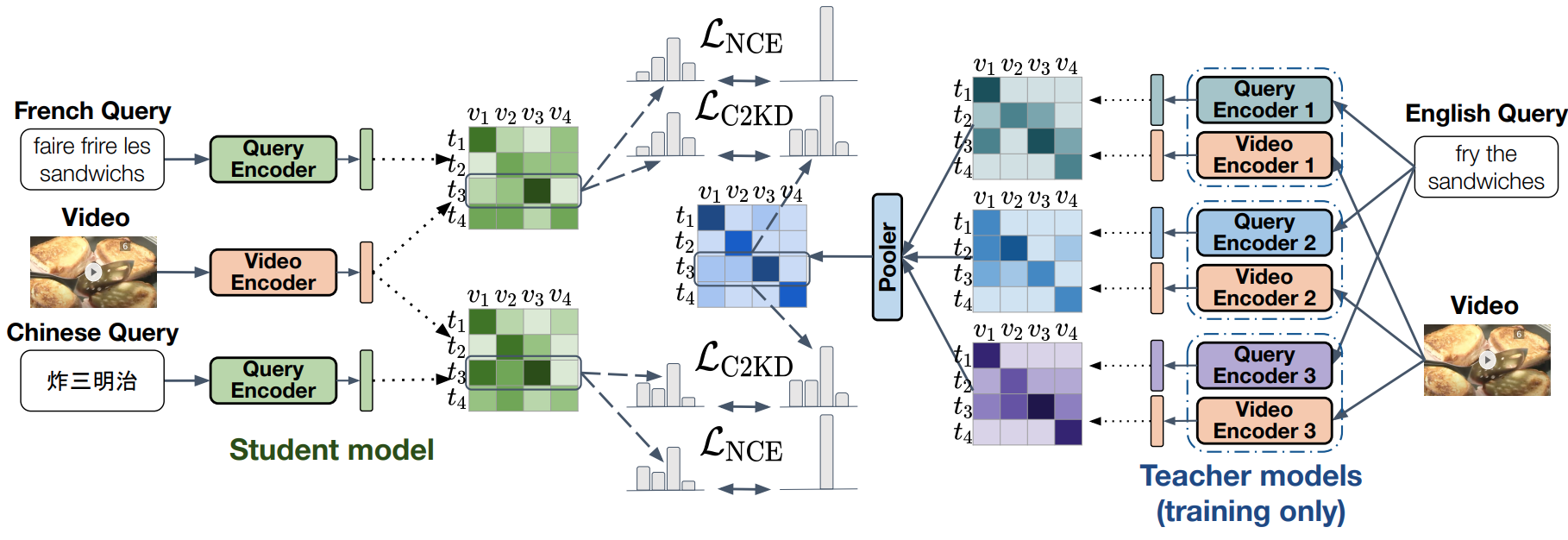

C2KD: Cross-Lingual Cross-Modal Knowledge Distillation for Multilingual Text-Video Retrieval Andrew Rouditchenko, Yung-Sung Chuang, Nina Shvetsova, Samuel Thomas, Rogerio Feris, Brian Kingsbury, Leonid Karlinsky, David Harwath, Hilde Kuehne, James Glass International Conference on Acoustics, Speech and Signal Processing (ICASSP) 2023 Code, dataset, and Colab Demos Video Presentation Paper |

|

|

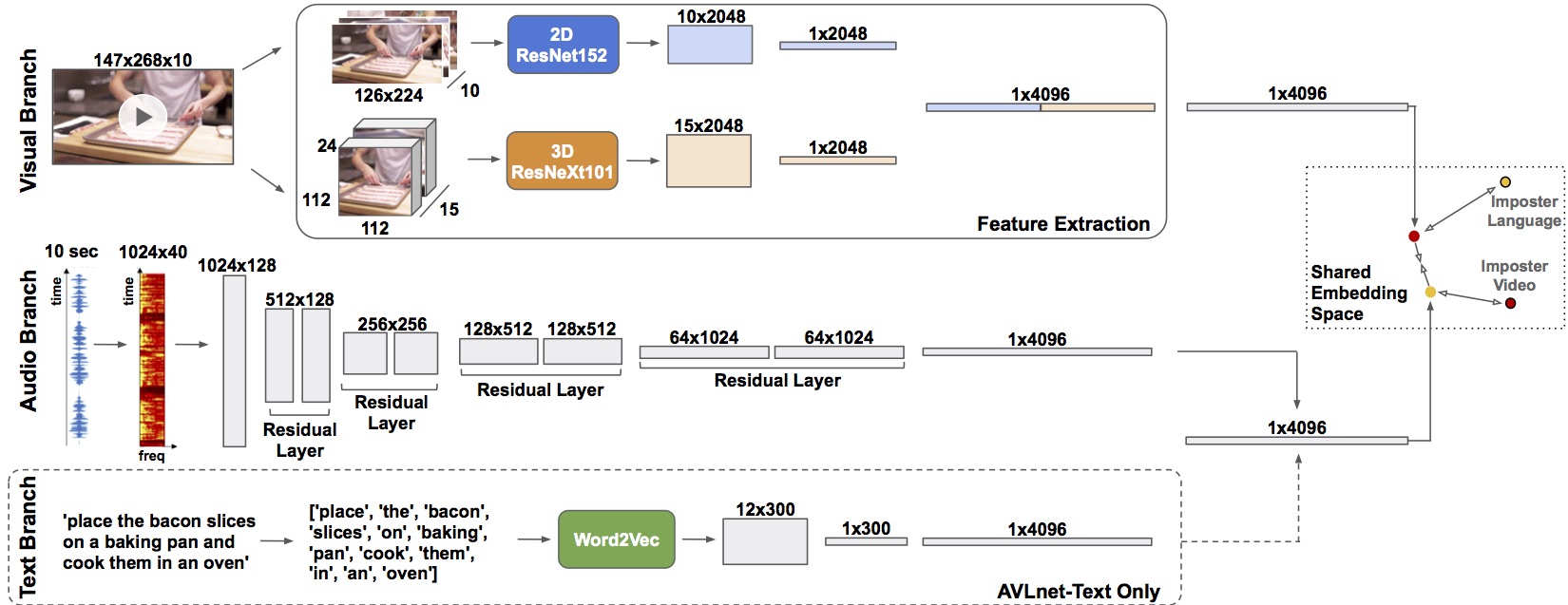

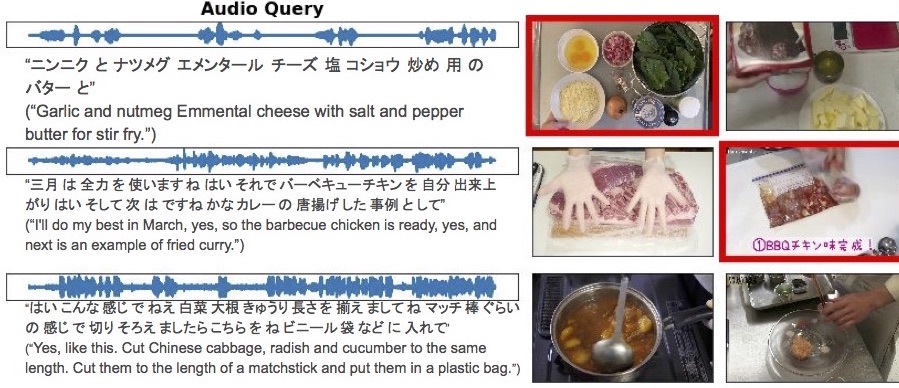

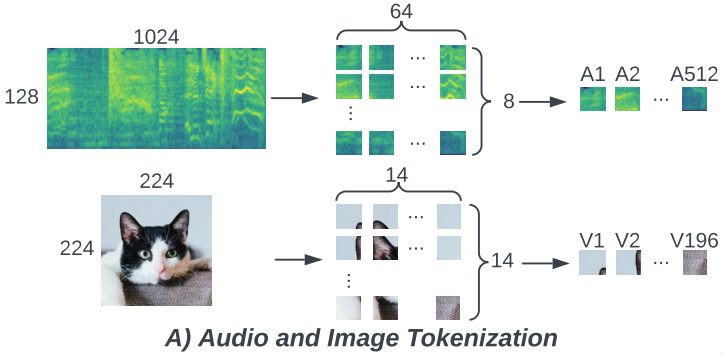

AVLnet: Learning Audio-Visual Language Representations from Instructional Videos Andrew Rouditchenko*, Angie Boggust*, David Harwath, Brian Chen, Dhiraj Joshi, Samuel Thomas, Kartik Audhkhasi, Hilde Kuehne, Rameswar Panda, Rogerio Feris, Brian Kingsbury, Michael Picheny, Antonio Torralba, James Glass Interspeech 2021 Project website with code, data, models, and demo Paper Video Presentation (YouTube) |

|

|

Cascaded Multilingual Audio-Visual Learning from Videos Andrew Rouditchenko, Angie Boggust, David Harwath, Samuel Thomas, Hilde Kuehne, Brian Chen, Rameswar Panda, Rogerio Feris, Brian Kingsbury, Michael Picheny, James Glass Interspeech 2021 Project website with code, data, and models Paper Video Presentation (YouTube) |

|

|

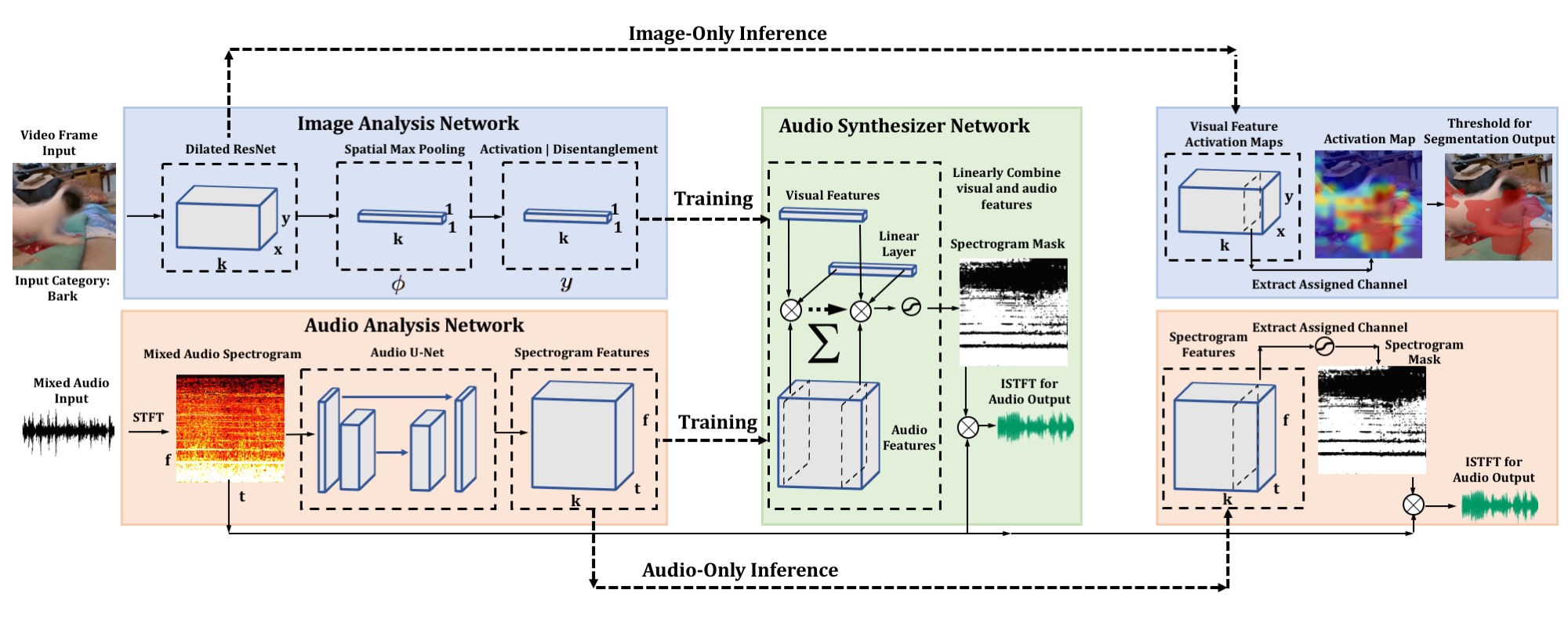

Self-Supervised Audio-Visual Co-Segmentation Andrew Rouditchenko*, Hang Zhao*, Chuang Gan, Josh McDermott, Antonio Torralba International Conference on Acoustics, Speech and Signal Processing (ICASSP) 2019 ICASSP Paper CVPR Multi-Modal Learning from Videos Workshop Paper |

|

Selected Co-Author Publications |


|

Contrastive Audio-Visual Masked Autoencoder Yuan Gong, Andrew Rouditchenko, Alexander Liu, David Harwath, Leonid Karlinsky, Hilde Kuehne, James Glass International Conference on Learning Representations (ICLR) 2023 Paper |

|

|

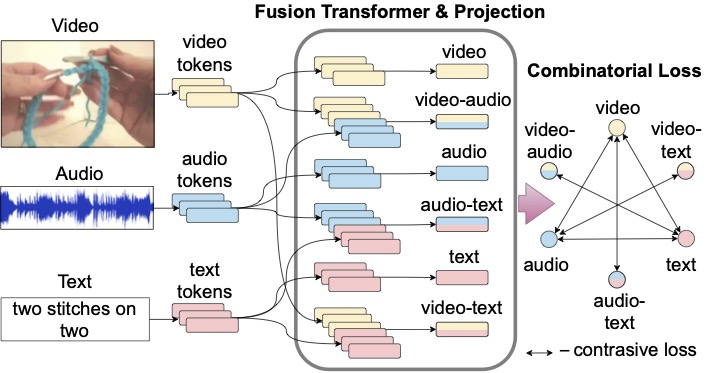

Everything at Once -- Multi-modal Fusion Transformer for Video Retrieval Nina Shvetsova, Brian Chen, Andrew Rouditchenko, Samuel Thomas, Brian Kingsbury, Rogerio Feris, David Harwath, James Glass, Hilde Kuehne Conference on Computer Vision and Pattern Recognition (CVPR) 2022 Paper |

|

|

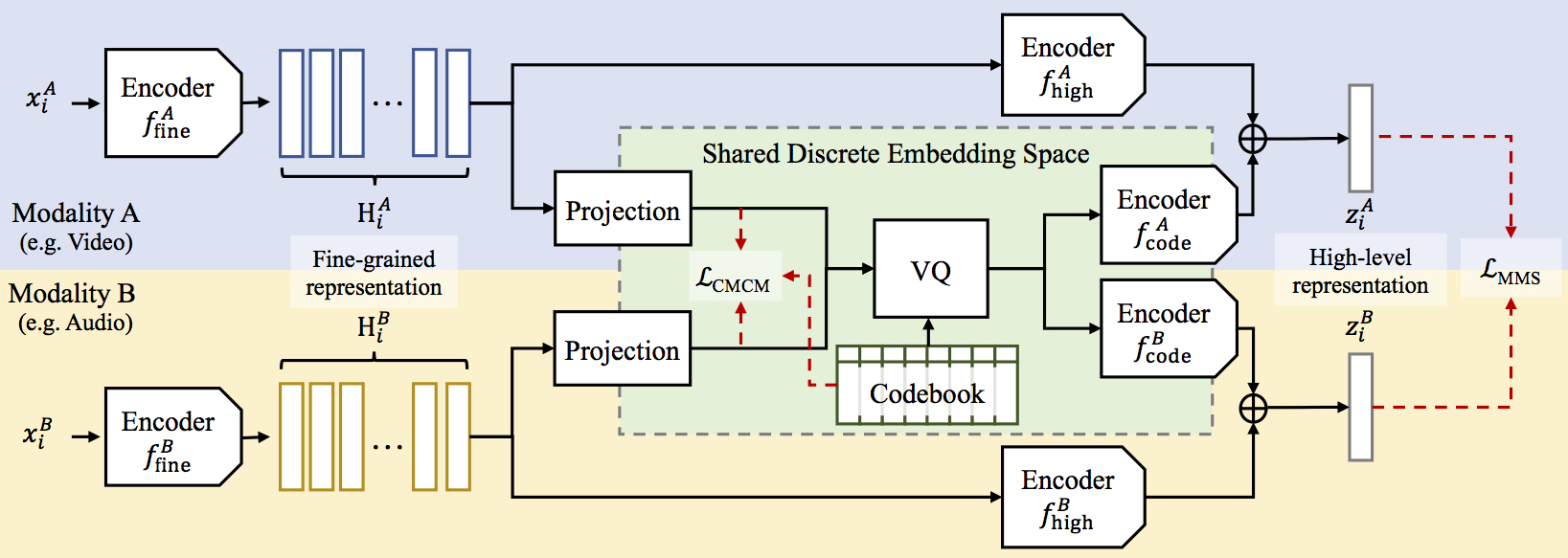

Cross-Modal Discrete Representation Learning Alexander H. Liu, SouYoung Jin, Cheng-I Jeff Lai, Andrew Rouditchenko, Aude Oliva, James Glass Annual Meeting of the Association for Computational Linguistics (ACL) 2022 Paper |

|

|

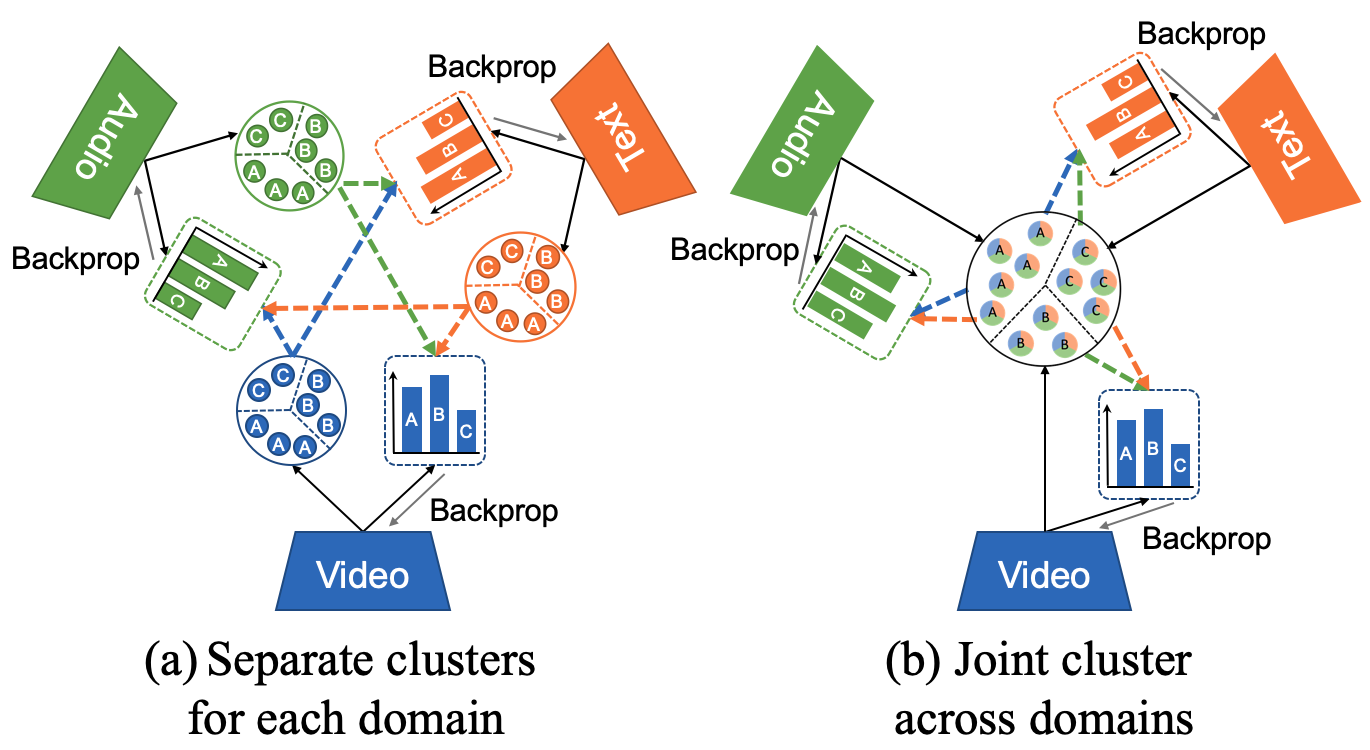

Multimodal Clustering Networks for Self-Supervised Learning from Unlabeled Videos Brian Chen, Andrew Rouditchenko, Kevin Duarte, Hilde Kuehne, Samuel Thomas, Angie Boggust, Rameswar Panda, Brian Kingsbury, Rogerio Feris, David Harwath, James Glass, Michael Picheny, Shih-Fu Chang International Conference on Computer Vision (ICCV) 2021 Paper |

|

|

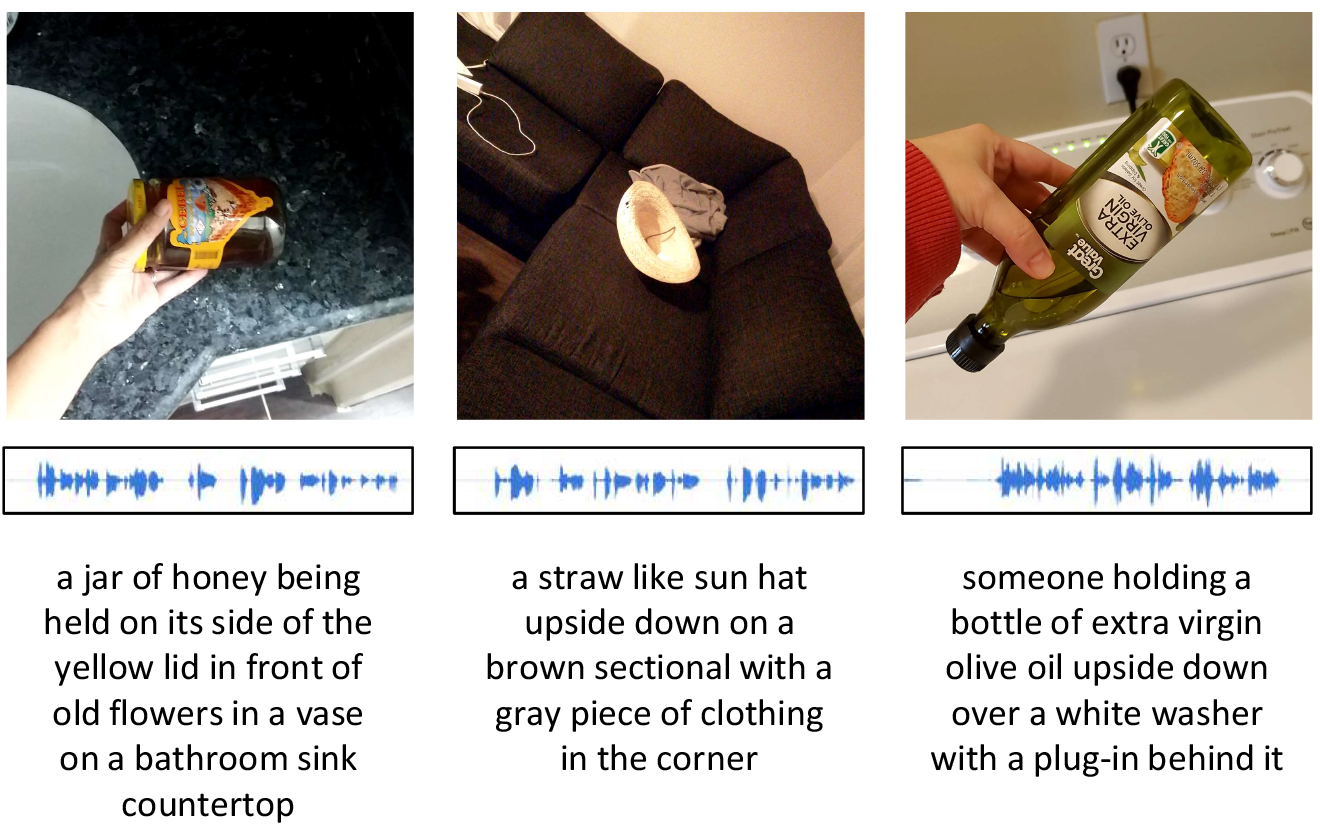

Spoken ObjectNet: A Bias-Controlled Spoken Caption Dataset Ian Palmer, Andrew Rouditchenko, Andrei Barbu, Boris Katz, James Glass Interspeech 2021 Project website with code and data Paper (the arXiv version contains additional experiments on the test set) Video Presentation (YouTube) |

|

|

|

The Sound of Pixels Hang Zhao, Chuang Gan, Andrew Rouditchenko, Carl Vondrick, Josh McDermott, Antonio Torralba European Conference on Computer Vision (ECCV) 2018 Project website with code and dataset Paper MIT News article |

|

Website source code credit: Jon Barron.