Convex and Combinatorial Optimization for Dynamic Robots in the Real World

Russ Tedrake

My motivating applications

- Robots in the home

- Autonomous vehicles

My goals for today

- Discuss some of the big questions

- Do home robots actually need "verification"? (or should we all be working on batteries, or sensors, or actuators ...)

- What are the relevant challenges/technologies for autonomous cars?

- Discuss what dynamic robots can and can't do well

- Describe the optimization problems we formulate and how we solve them

- Expose our "pain points"... and convince you to help!

- Organize around core problems:

- Perception, Planning, Feedback Design, Verification

"Hybrid Systems"

- Not just discrete decisions; also discrete/discontinuous dynamics (e.g. collision events and frictional contact).

- "Language" of hybrid systems (modes, guards, resets, ...) is sufficient...

- ... but often impoverished for synthesis/verification.

- Found happiness in e.g. measure-differential inclusions (MDIs) and mixed combinatorial-continuous optimization

- Constant theme of "exploiting structure" (especially between modes)

- Nonlinear dynamics: sparsity, convexity, algebraic structure.

- Combinatorial structure (even in the mechanics).

- Approaches: Submodularity/Matroid, SMT, MI-Convex, Convex relaxations, ...

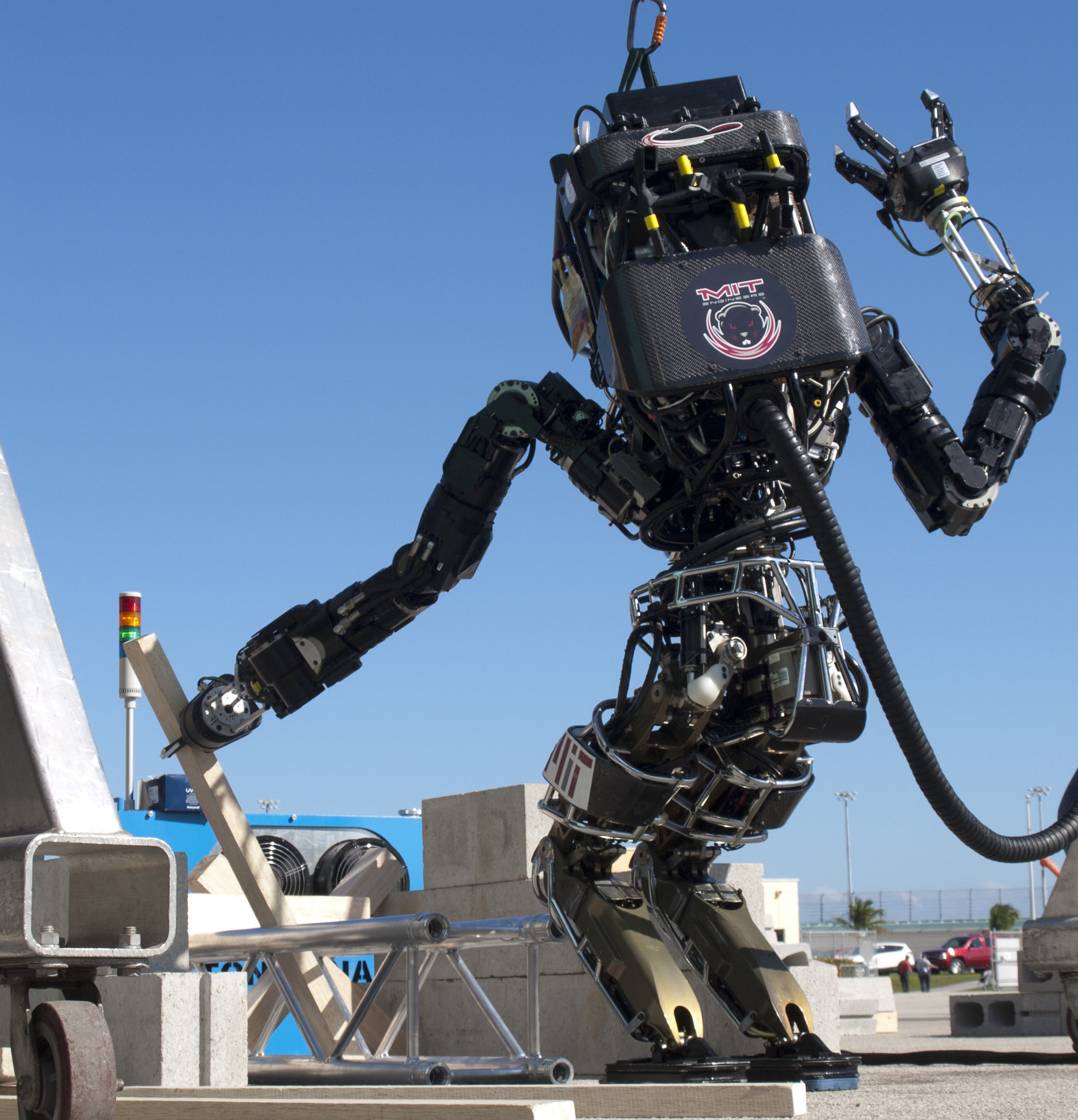

MIT's approach to the DARPA Robotics Challenge

Rules allowed a human operator, but with a degraded network connection

Teleoperation vs. Complete Autonomy

Our approach:

- Focus on autonomy.

- Human operator provides high-level "clicks" to seed (nearly) autonomous algorithms

- Almost everything the robot does is formulated as an optimization

- For me personally, a triumph for optimization-based synthesis

Trajectory Motion Planning

Simplest example: Inverse Kinematics

- Joint positions: $q$

- end effector (rich constraint spec)

- joint limits

- collision avoidance

- "gaze constraints"

- feet stay put

- balance constraints (center of mass)

- ...

(and possibly "infeasible")
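
The inverse kinematics problem above can be sketched as a small constrained optimization. This is a minimal illustration, not the solver used on the robot: the planar two-link arm, the link lengths, the nominal posture, and the helper names `end_effector` and `solve_ik` are all assumptions made for the example. SciPy's SLSQP method stands in for the SQP solver.

```python
import numpy as np
from scipy.optimize import minimize

L1, L2 = 1.0, 0.8  # link lengths (illustrative values, not from the talk)

def end_effector(q):
    """Forward kinematics of a planar two-link arm."""
    x = L1 * np.cos(q[0]) + L2 * np.cos(q[0] + q[1])
    y = L1 * np.sin(q[0]) + L2 * np.sin(q[0] + q[1])
    return np.array([x, y])

def solve_ik(target, q_nominal, joint_limits):
    """Find the posture closest to q_nominal that reaches target within joint limits."""
    result = minimize(
        lambda q: np.sum((q - q_nominal) ** 2),   # stay near a comfortable posture
        x0=q_nominal,
        method="SLSQP",                           # an SQP method
        bounds=joint_limits,                      # joint limits
        constraints=[{"type": "eq",               # end-effector position constraint
                      "fun": lambda q: end_effector(q) - target}],
    )
    # Report "infeasible" unless the end effector actually reached the target.
    reached = np.linalg.norm(end_effector(result.x) - target) < 1e-4
    return result.x, bool(result.success and reached)

q_nominal = np.array([0.3, 0.6])
limits = [(-np.pi / 2, np.pi / 2), (0.0, 2.5)]
q_sol, ok = solve_ik(np.array([1.2, 0.9]), q_nominal, limits)
q_bad, ok_bad = solve_ik(np.array([5.0, 0.0]), q_nominal, limits)  # out of reach
```

The second call asks for a target beyond the arm's reach, so it comes back flagged as infeasible. Further constraints from the list (gaze, balance, collision avoidance) would be added as extra entries in `constraints`.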

Big robot, little car

Kinematic trajectory planning

- Optimize $q(t)$ spline coefficients

- Add constraints through time (points in contact don't move)

- Solve with SQP (or randomized planning)

- Solves in seconds

Dynamic trajectory planning

For more dynamic motions have to add additional constraints ($F=ma$, actuator limits, friction cones, ...)

For smooth dynamical systems, trajectory optimization still works well
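
A minimal sketch of trajectory optimization for a smooth system, assuming the simplest possible model: a point mass pushed along a line. The knot count, time step, mass, and force limit are illustrative values, and forward Euler is just one possible transcription; none of these come from the talk. $F=ma$ appears as equality constraints between knots and the actuator limit as bounds on the force.

```python
import numpy as np
from scipy.optimize import minimize

N, h, m, u_max = 20, 0.1, 1.0, 3.0  # knots, time step, mass, force limit (illustrative)

def unpack(z):
    """Split the decision vector into positions, velocities, and forces."""
    return z[:N + 1], z[N + 1:2 * N + 2], z[2 * N + 2:]

def cost(z):
    """Integrated squared force (control effort)."""
    return h * np.sum(unpack(z)[2] ** 2)

def cost_grad(z):
    """Gradient of the effort cost; only the force entries are nonzero."""
    grad = np.zeros_like(z)
    grad[2 * N + 2:] = 2.0 * h * z[2 * N + 2:]
    return grad

def dynamics_defects(z):
    """F = ma, enforced between consecutive knots with forward Euler."""
    x, v, u = unpack(z)
    return np.concatenate([x[1:] - x[:-1] - h * v[:-1],
                           v[1:] - v[:-1] - h * u / m])

def boundary(z):
    """Start at rest at x = 0 and finish at rest at x = 1."""
    x, v, _ = unpack(z)
    return np.array([x[0], v[0], x[-1] - 1.0, v[-1]])

bounds = [(None, None)] * (2 * N + 2) + [(-u_max, u_max)] * N  # actuator limits
sol = minimize(cost, np.zeros(3 * N + 2), jac=cost_grad, method="SLSQP",
               bounds=bounds,
               constraints=[{"type": "eq", "fun": dynamics_defects},
                            {"type": "eq", "fun": boundary}],
               options={"maxiter": 500})
x_opt, v_opt, u_opt = unpack(sol.x)
```

Friction cones and richer dynamics would enter the same way, as additional constraint functions on the knot variables.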

Planning with Contact

- Contact (e.g. foot falls or grasping) adds "complementarity constraints"

- Add nonlinear constraint to SQP:

distance $\times$ force = 0

- Plans take > 1 minute to compute

- ...and don't always succeed (local minima, numerical issues, ...)

- Did not use in the competition
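
The "distance $\times$ force = 0" condition is commonly written as a complementarity constraint. The notation here is an assumption, since the slides introduce no symbols for it: $\phi(q)$ is the signed distance between the contact point and the surface, and $\lambda$ is the normal force magnitude.

\begin{align}
\phi(q) &\ge 0, \\
\lambda &\ge 0, \\
\phi(q)\,\lambda &= 0.
\end{align}

Either the contact is open ($\phi > 0$, so $\lambda = 0$) or it is closed ($\phi = 0$, with $\lambda \ge 0$). The feasible set has a corner at $\phi = \lambda = 0$, which is one commonly cited reason that SQP solvers struggle with these constraints.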

absolutely awesome! but no planning here (yet)

There is real-world, online planning here:

but do you know why humanoids walk like this?

Constant center of mass (CoM) height $\Rightarrow$

- No impact events

- Linear center of mass dynamics

- Standard recipe for humanoid walking:

- plan footsteps/contacts [heuristic]

- plan constant-height CoM (also zero angular momentum) [convex]

- My goal: reliable online planning that

- includes footsteps, contact

- removes CoM / momentum restrictions
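
Why constant CoM height gives linear dynamics can be seen from the standard linear inverted pendulum model. The slides do not name this model, so the notation is an assumption: $x$ is the horizontal CoM position, $z_0$ the constant CoM height, $p$ the center of pressure on the ground, and $g$ the gravitational acceleration. With zero angular momentum about the CoM,

\begin{align}
\ddot{x} = \frac{g}{z_0}\,(x - p).
\end{align}

With $z_0$ fixed, the right-hand side is linear in $x$ and $p$, which is what keeps the CoM planning step convex. If the height were allowed to vary, it would multiply the unknowns and the linearity would be lost.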

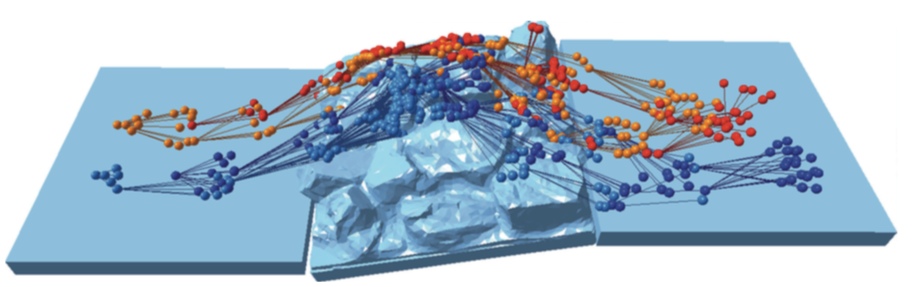

Footstep planning

Footsteps add a combinatorial aspect to the planning problem, but there is a continuous portion, too.

Planning on LittleDog (c. 2008)

Search-based footstep placement

- Chestnutt et al., Vernaza et al., Winkler et al., Zucker et al.,...

- Search over action graph of either

- Footsteps

- Body positions

- Discrete set of reachable footsteps

- No guarantees of completeness

- Adding dynamics made the search harder (more expensive checks)

First step: explicitly enumerate the regions

Mixed-integer convex optimization

- Assignment of contacts to regions adds an "integer constraint":

- Non-convex optimization (always).

- Worst-case complexity is awful. Brute force search.

- "Mixed-integer convex" iff relaxation (ignoring integer constraint) is convex

- Relaxation gives lower bounds $\Rightarrow$ effective branch-and-bound search

- Very efficient commercial solvers.

- return global optimality (to tolerance)

- or "infeasible"
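
A toy sketch of the structure described above: one integer decision (which region the foot lands in) wrapped around a convex subproblem (where in that region). Everything here is illustrative; the box-shaped regions, the goal point, and the function names are assumptions. The loop is the brute-force search that the slide calls the worst case; commercial solvers instead use relaxation lower bounds to prune it with branch-and-bound.

```python
import numpy as np

# Convex "safe" regions for foot placement, as axis-aligned boxes (lower, upper).
REGIONS = [
    (np.array([0.0, 0.0]), np.array([1.0, 1.0])),
    (np.array([1.5, 0.2]), np.array([2.5, 0.8])),
    (np.array([3.0, 0.0]), np.array([4.0, 1.0])),
]

def solve_convex_subproblem(goal, region):
    """With the region fixed, the closest point to the goal is a projection onto a box."""
    lower, upper = region
    point = np.clip(goal, lower, upper)
    return point, float(np.sum((point - goal) ** 2))

def place_foot(goal):
    """Enumerate the integer choice (which region) and keep the best convex solution."""
    best = None
    for index, region in enumerate(REGIONS):
        point, cost = solve_convex_subproblem(goal, region)
        if best is None or cost < best[2]:
            best = (index, point, cost)
    return best

index, point, cost = place_foot(np.array([2.8, 0.5]))
```

For the goal used here, the nearest admissible foothold lies on the edge of the third region (`index == 2`). With several footsteps the number of assignments grows exponentially, which is why pruning matters.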

More than a "hybrid system"

- Both AI-style search and "hybrid systems" model promote thinking about every mode as independent.

- Transcription to MI-Convex forces thinking about the relationship between modes.

- Here:

- Always $F=ma$,

- Non-zero force from a region when foot is in contact.

Adding more dynamics

- Still very conservative walking

- Integer variables enabled making/breaking contact

- General case needs to handle angular momentum (but non-convex)

\begin{align}
T &= \mathbf{p} \times \mathbf{f} \\
  &= p_{z}f_{x} - p_{x}f_{z}
\end{align}

Momentum terms are non-convex

MI-Convex relaxations of bilinear terms

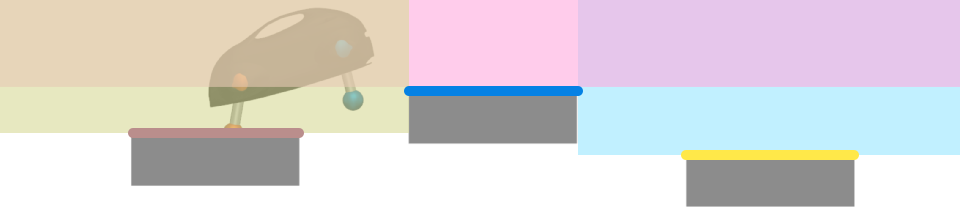
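
One standard way to relax a bilinear term $w = xy$ is with McCormick envelopes, made tighter by splitting the range of $x$ into pieces and letting an integer variable pick the piece. The slides do not say which relaxation is used, so treat this as a sketch of the general idea with illustrative ranges and function names.

```python
import numpy as np

def mccormick_bounds(x, y, xl, xu, yl, yu):
    """Lower and upper envelopes of w = x*y for x in [xl, xu], y in [yl, yu]."""
    lower = np.maximum(xl * y + x * yl - xl * yl, xu * y + x * yu - xu * yu)
    upper = np.minimum(xu * y + x * yl - xu * yl, xl * y + x * yu - xl * yu)
    return lower, upper

def worst_gap(num_pieces, samples=201):
    """Largest envelope width when the x-range [-1, 1] is split into equal pieces.

    Picking which piece x lies in is the integer decision; inside a piece the
    constraints on (x, y, w) are linear, hence convex.
    """
    edges = np.linspace(-1.0, 1.0, num_pieces + 1)
    ys = np.linspace(-1.0, 1.0, samples)
    gap = 0.0
    for xl, xu in zip(edges[:-1], edges[1:]):
        X, Y = np.meshgrid(np.linspace(xl, xu, samples), ys)
        lower, upper = mccormick_bounds(X, Y, xl, xu, -1.0, 1.0)
        # The true product always lies between the two envelopes.
        assert np.all(lower <= X * Y + 1e-12) and np.all(X * Y <= upper + 1e-12)
        gap = max(gap, float(np.max(upper - lower)))
    return gap

gap_one_piece = worst_gap(1)
gap_four_pieces = worst_gap(4)
```

Splitting the range into four pieces shrinks the worst-case envelope width by a factor of four, at the cost of extra integer variables for the solver to branch on.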

Application to a bounding planar quadruped

- Three contact regions (bold lines)

- Three (overlapping) free-space regions (shaded)

- Assignment to regions provides (convex) constraints for position and force

From MICP results to whole-body planning

- Optimization on simple models can seed non-convex optimization

Grasp optimization

- Optimize forces and contact positions for robustness

- Non-convex from torque (cross product) + assignment of fingers to regions

- Bilinear Matrix Inequalities (BMIs), solved as SDP w/ rank-minimization

- Include kinematic and dynamic constraints (solves inverse kinematics, too)

Grasp optimization

- Find the pose that maximizes the wrench disturbance the grasp can resist, given torque limits

Feedback Control

Example: Whole-Body Force Control

- Planners give desired CoM + end effector trajectories

- Solve differential Riccati equation for approximate CoM feedback

- One-step control Lyapunov function accounts for:

- whole-body inverse dynamics

- instantaneous contact constraints (incl. friction limits)

- torque limits

- results in a sparse QP
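
The ingredients listed above can be assembled into a QP of roughly the following shape. This is a sketch in standard manipulator notation, not the exact formulation from the talk. The symbols are assumptions: $M$ is the mass matrix, $C$ the Coriolis and gravity terms, $B$ the actuation matrix, $J$ the contact Jacobian, $\lambda$ the contact forces, $u$ the joint torques, and $\ell$ a quadratic cost built from the Riccati solution.

\begin{align}
\min_{\ddot{q},\,u,\,\lambda} \quad & \ell(q, \dot{q}, \ddot{q}) \\
\text{subject to} \quad & M(q)\ddot{q} + C(q,\dot{q}) = B u + J(q)^{T}\lambda, \\
& J(q)\ddot{q} + \dot{J}(q,\dot{q})\,\dot{q} = 0, \\
& \lambda \in \mathcal{K}_{\text{friction}}, \\
& u_{\min} \le u \le u_{\max}.
\end{align}

At a fixed state $(q, \dot{q})$ the matrices are constants, so with a polyhedral approximation of the friction cone $\mathcal{K}_{\text{friction}}$ every constraint is linear in $(\ddot{q}, u, \lambda)$ and the problem is a QP.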

Whole-Body Force Control

- Balance control worked extremely well...

- ... except for the one time it didn't

What went wrong?

- First, a human error

- Then tailbone hit the seat and feet came off the ground...

- No contact sensor in the butt

- Dynamics model very wrong

- State estimator very confused

- Controller is done!

This was a "corner case"

- Errant process sending commands to the ankle

- Particularly unfortunate ankle command

- When robot was most vulnerable

- Never saw this during testing

Ironically

- For flat terrain, we did work out good heuristics...

Another form of robustness

Feedback for Grasping

- Grasping was open-loop (no feedback)

- Touch sensors / hand cameras were fragile and difficult to use

- Have been trying to extend grasp optimization to feedback design

- Adds sums-of-squares constraints

- Still numerically bad. No success here, yet.

Perception

Continuous walking with dense stereo

Perception for Walking

- In the competition, relied on lidar; local "fitting" via least squares was sufficient

Perception for multi-contact planning

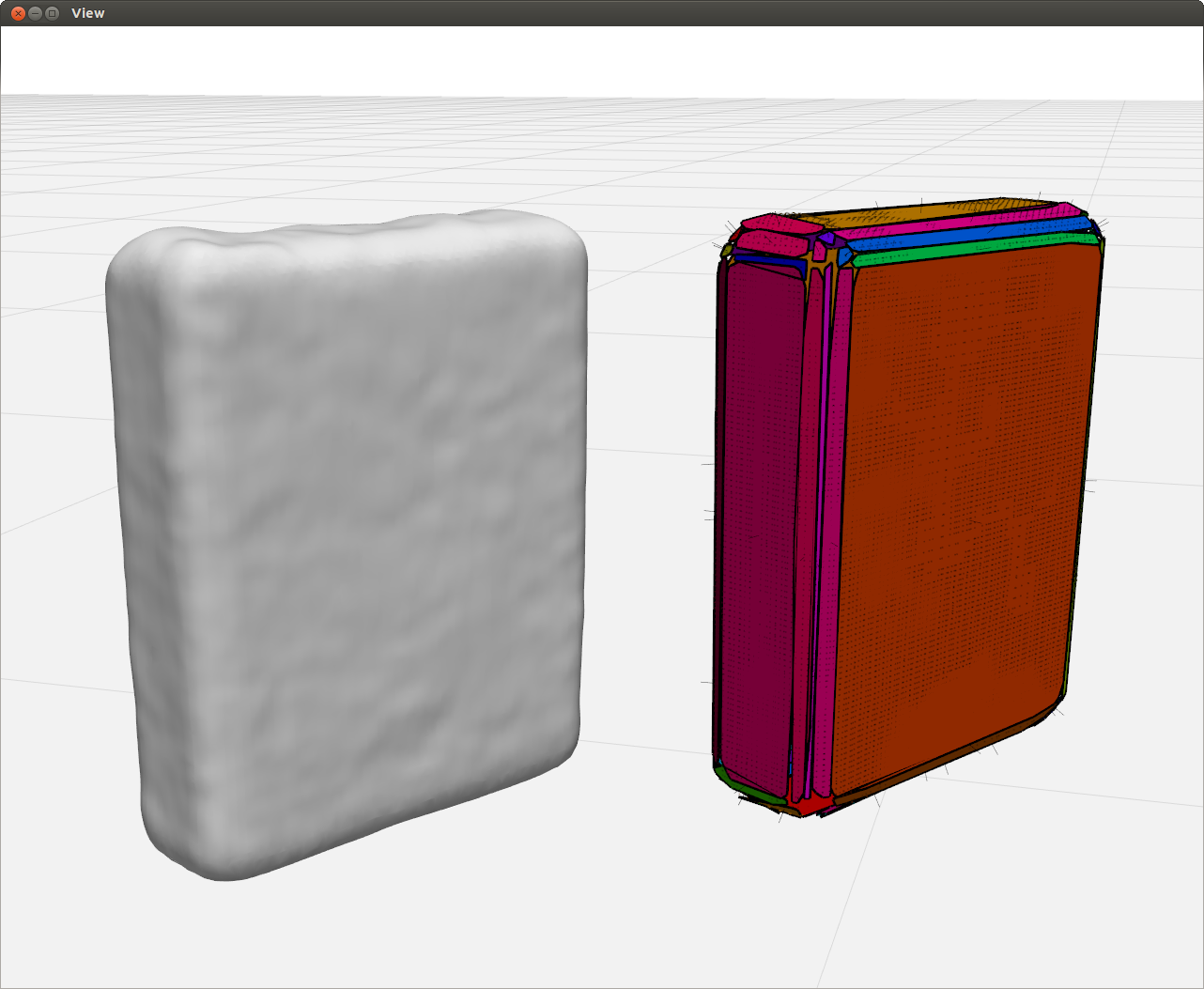

also manipulation of unknown objects

- In the DRC, we only touched known objects (cinderblocks, drill, ...)

- Fit CAD models to raw sensor data

Perception for Manipulation

- Avoided object recognition via a few clicks from the human

Robust Synthesis/Verification

- Two (related) ideas:

- Reduce sensitivity of components to expected disturbances (e.g. $L_2$-gain minimization, robust control).

- System-level search for logical corner cases (e.g. formal methods).

complex robot dynamics

(dynamics of vehicles are simpler than humanoids)

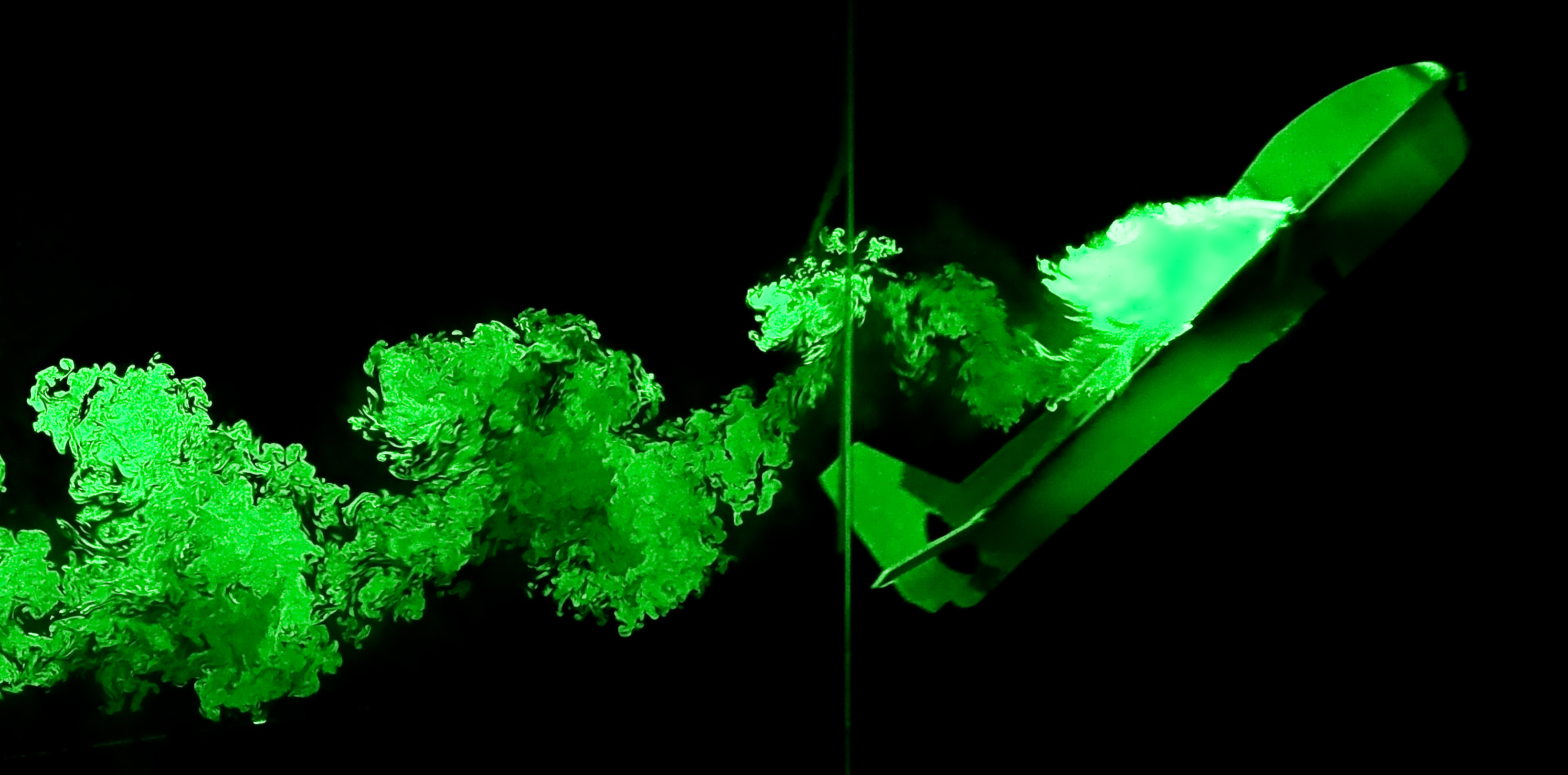

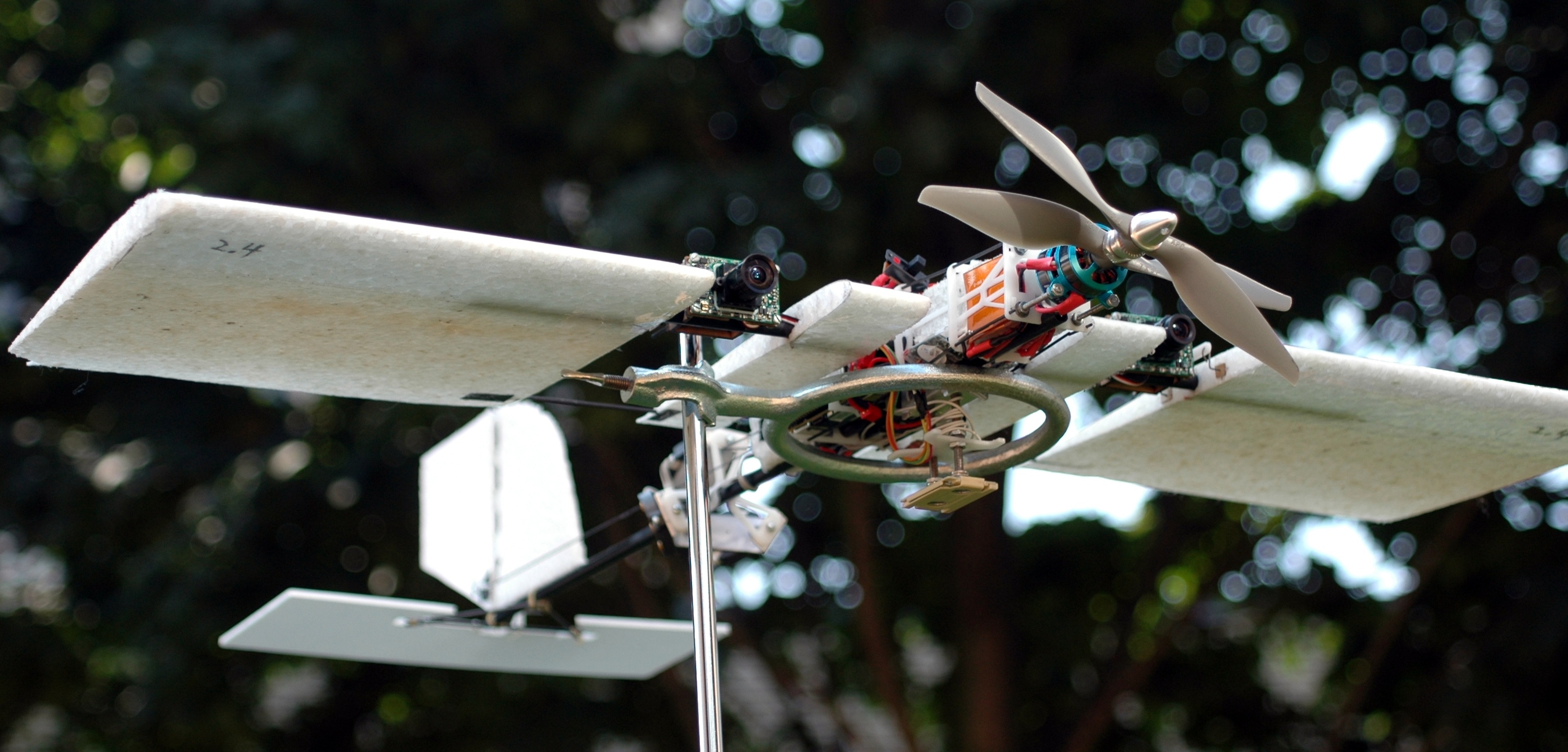

Example: An airplane that can land on a perch

Can we make a control system for a fixed-wing airplane to land on a perch like a bird?

Glider Perching Experiment

Robust planning and control

- Key ingredient: Reason algebraically about nonlinear system dynamics

- Motion planning focuses on a single trajectory (one solution)

- Robust analysis reasons about all possible solutions

Analysis by searching for a Lyapunov function.

Computing Lyapunov functions

- Historically difficult to find Lyapunov functions

- First observation: Verifying Lyapunov conditions requires checking positivity of functions ($V(x)>0$, $\dot{V}(x) < 0$)

- Second observation: The dynamics of my robots are (rational) polynomial

- "Sums-of-Squares" (SOS) optimization can approximate positivity as a semidefinite constraint

- Verifying a candidate Lyapunov function is a convex optimization

- Robust control design using Lyapunov functions is a bilinear optimization
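
How positivity turns into a semidefinite condition can be seen on a small textbook polynomial. The example is not taken from the talk. A polynomial $p$ is a sum of squares if $p = z^T Q z$ for a vector of monomials $z$ and a positive semidefinite Gram matrix $Q$. In practice an SDP solver searches for $Q$; here $Q$ is given and only checked.

```python
import numpy as np

# Gram matrix Q for p(x, y) = 2x^4 + 2x^3*y - x^2*y^2 + 5y^4
# in the monomial basis z = [x^2, y^2, x*y], so that p = z^T Q z.
Q = np.array([[ 2.0, -3.0, 1.0],
              [-3.0,  5.0, 0.0],
              [ 1.0,  0.0, 5.0]])

def p(x, y):
    """The polynomial whose nonnegativity we want to certify."""
    return 2 * x**4 + 2 * x**3 * y - x**2 * y**2 + 5 * y**4

def gram_form(x, y):
    """The same polynomial written as z^T Q z."""
    z = np.array([x**2, y**2, x * y])
    return z @ Q @ z

eigenvalues = np.linalg.eigvalsh(Q)
is_sos = bool(np.all(eigenvalues >= -1e-9))  # Q PSD  =>  p is a sum of squares

# Spot check at random points: the two forms agree and are never negative.
rng = np.random.default_rng(0)
points = rng.uniform(-2.0, 2.0, size=(100, 2))
forms_agree = all(np.isclose(p(x, y), gram_form(x, y)) for x, y in points)
nonnegative = all(p(x, y) >= -1e-9 for x, y in points)
```

The sampling at the end is only a sanity check. The certificate is the eigenvalue test, which covers every $(x, y)$ at once, and that is the sense in which exhaustive evaluation is replaced by an algebraic condition.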

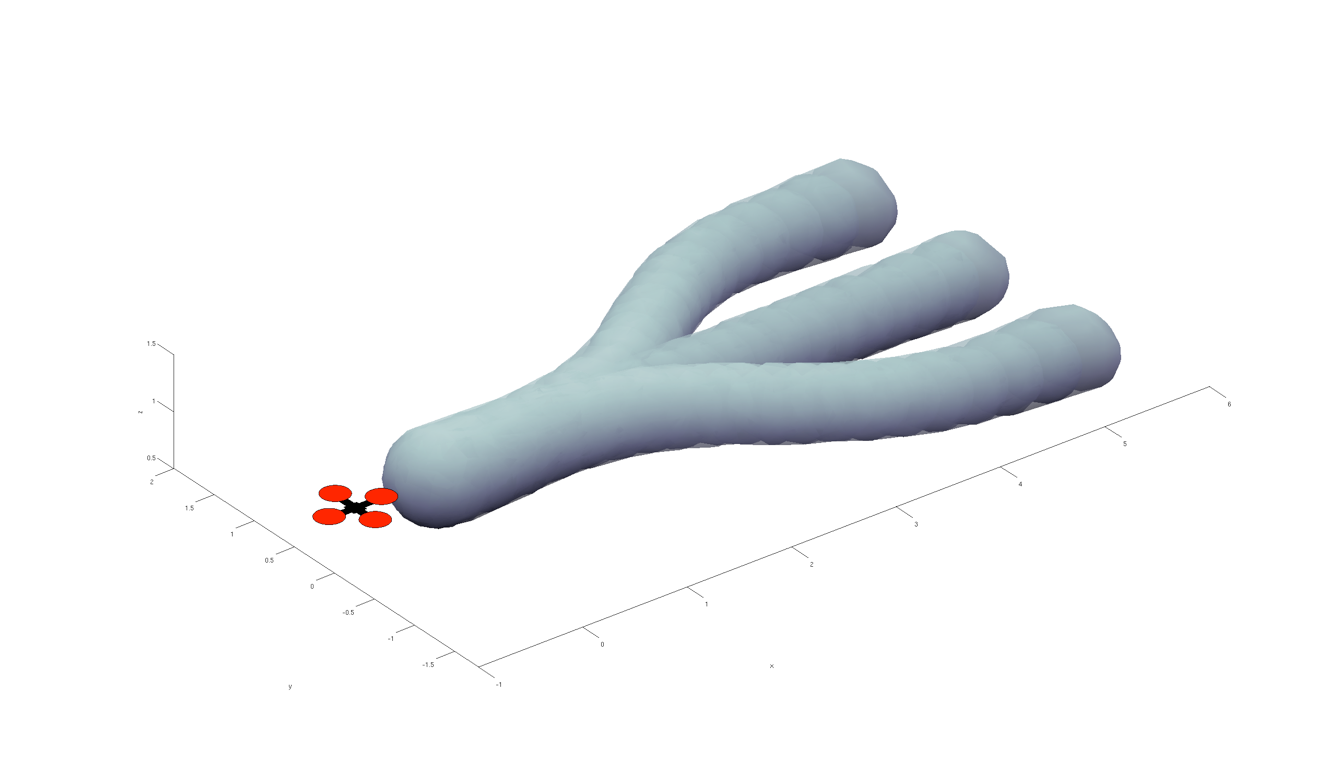

Example: Region of attraction for a Quadrotor

Lyapunov verification of (aggressive) motion plans

- 7+ dimensional state space

- Exhaustive evaluation is impossible

Flying through forests

Real-time planning w/ funnels

- Real-time Sums-of-Squares?

- Precompute library of parameterized funnels

- Shift/compose funnels at runtime

What if obstacles aren't known until runtime?

Outdoor experiments using 120 fps onboard vision

- Logistics: corner cases mean human intervention, loss of efficiency

- Home robots: "artificial stupidity" will limit consumers' appetite

- Autonomous vehicles: corner cases will cost human lives

But why do robots really need "verification"?

To accelerate the pace of innovation.

It takes time to find the corner cases

source: A Statistical Analysis of Commercial Aviation Accidents 1958-2015, Airbus.

- Challenge: Increasing system complexity

- Sensors (camera, lidar, radar, projected light...)

- Dynamics (contact mechanics, tire models, ...)

- Software (perception, planning, learning in the loop)

- Complexity of the environment (with humans)

- How do you provide test coverage of every home in the U.S.?

- ... and every possible human interaction?

Data is precious

I'm taking a different approach

- Open-source C++ toolbox for model-based optimization (http://drake.mit.edu)

- Simulate very complex dynamics, sensors, ... plus tools for

- System identification

- Runtime monitoring / anomaly detection

- Active experiment design

- Built for optimization-based design/analysis

- Cloud simulation (e.g. for black-box approaches)

- Provides analytical gradients, sparsity, polynomials, ...

- Bindings to MATLAB, Python, Julia, ...

- Clear separation between problem formulations and solvers

- Toyota Research Institute + other industry investment

- Very interested in robustness / verification

- Immediate applications

Rigorous Simulation

Rigorous Simulation (on the cloud)

Specific technical challenges

- Robust design/analysis through contact

- Current controllers have "obvious" deficiencies w/ respect to contact uncertainty.

- Robust perception

- Really feedback systems with perception in the loop.

- Don't even have the robustness metric yet (e.g. input-output gain less important than outlier rejection)

- Robust design/analysis w/ humans in the loop.

- Modeling is the key challenge.

Robust design/analysis through contact

Combinatorial number of contact conditions

Robust synthesis/analysis through contact

Robust design/analysis with humans in the loop

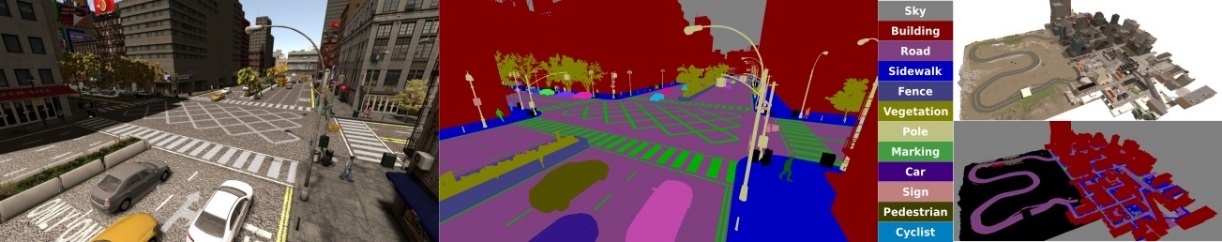

Building large-scale traffic models (+ sensor models) in Drake.

Work with Jon DeCastro and Soonho Kong (in the audience).

Emerging themes:

- Falsification, not verification

- Falsification alone is too easy (adversarial vehicles will get you).

- Need good models, return highest probability failures until satisfied
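
A toy sketch of "return highest probability failures", using a scenario invented for this example and not taken from the talk: a follower car reacts late to a braking lead car. The speeds, braking rates, and Gaussian disturbance model are illustrative assumptions, and plain Monte Carlo sampling stands in for whatever search method is actually used.

```python
import numpy as np

V0, GAP0, B_FOLLOW = 20.0, 30.0, 6.0  # speed (m/s), initial gap (m), follower braking (m/s^2)
A_MEAN, A_STD = 4.0, 2.0              # assumed model of lead-car braking (m/s^2)
TAU_MEAN, TAU_STD = 1.0, 0.3          # assumed model of follower reaction delay (s)

def position(t, decel, t_start):
    """Distance travelled by a car cruising at V0 that brakes from t_start until it stops."""
    braking_time = np.clip(t - t_start, 0.0, V0 / decel)
    return V0 * np.minimum(t, t_start) + V0 * braking_time - 0.5 * decel * braking_time**2

def min_gap(a_lead, tau):
    """Smallest gap between the cars; a value <= 0 means a collision."""
    t = np.linspace(0.0, 8.0, 801)
    return float(np.min(GAP0 + position(t, a_lead, 0.0) - position(t, B_FOLLOW, tau)))

def log_likelihood(a_lead, tau):
    """Log-density under the disturbance model, up to a constant (clipping ignored)."""
    return -0.5 * ((a_lead - A_MEAN) / A_STD) ** 2 - 0.5 * ((tau - TAU_MEAN) / TAU_STD) ** 2

def falsify(num_samples=2000, seed=0):
    """Sample disturbances, keep the failures, and rank them from most to least likely."""
    rng = np.random.default_rng(seed)
    a_samples = np.clip(rng.normal(A_MEAN, A_STD, num_samples), 0.5, 10.0)
    tau_samples = np.clip(rng.normal(TAU_MEAN, TAU_STD, num_samples), 0.1, 3.0)
    failures = [(log_likelihood(a, tau), a, tau)
                for a, tau in zip(a_samples, tau_samples) if min_gap(a, tau) <= 0.0]
    return sorted(failures, reverse=True)

failures = falsify()
```

The first entries of `failures` are the collisions that the disturbance model considers most plausible. Ranking by likelihood is what separates this from the "too easy" adversarial search, which would simply return the hardest possible braking and the longest possible delay.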

Summary

- Planning

- Mostly nonlinear optimization

- New results getting more dynamics into MI-convex formulations

- (Low-level) Perception

- Was about appearance. Now about geometry.

- Mostly simple (unconstrained) optimization, with randomized restarts

- Essentially no guarantees. Still not robust

- Feedback/Verification

- One-step control-Lyapunov as a QP, effective but limited

- SOS verification/synthesis works well for smooth systems, even aggressive motion

- SOS through contact still numerically difficult

Summary

model-based design/analysis to accelerate pace of iteration.

falsification can give value immediately.

Summary

in a language that can expose their structure

with SMT, SDP, submodular/matroid methods, mi-convex, ...).

For more information

- Software available at: http://drake.mit.edu

- Online course (edX): http://tiny.cc/mitx-underactuated
