Michael Rubinstein¹,² · Ce Liu²

¹MIT CSAIL · ²Microsoft Research


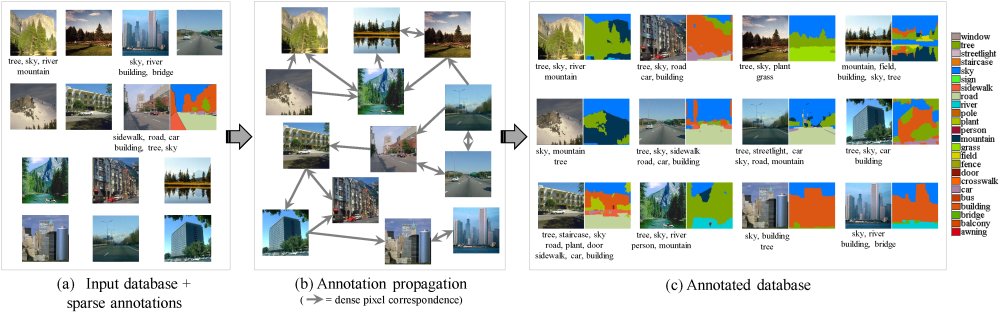

Input and output of our system. (a) The input image database, with scarce image tags and scarcer pixel labels. (b) Annotation propagation over the image graph, connecting similar images and corresponding pixels. (c) The fully annotated database produced by our system.

Abstract

Our goal is to automatically annotate many images with a set of word tags and a pixel-wise map showing where each word tag occurs. Most previous approaches rely on a corpus of training images in which every pixel is labeled. However, for large image databases, pixel labels are expensive to obtain and are often unavailable. Furthermore, when classifying multiple images, each image is typically solved independently, which often results in inconsistent annotations across similar images. In this work, we incorporate dense image correspondence into the annotation model, allowing us to make do with significantly less labeled data and to resolve ambiguities by propagating inferred annotations from images with strong local visual evidence to images with weaker local evidence. We establish a large graphical model spanning all labeled and unlabeled images, then solve it to infer annotations, enforcing consistent annotations over similar visual patterns. Our model is optimized by efficient belief propagation algorithms embedded in an expectation-maximization (EM) scheme. Extensive experiments on several standard large-scale image datasets show that the proposed framework outperforms state-of-the-art methods.
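The abstract describes inferring labels with belief propagation on a graph that links corresponding pixels across images, so that strong local evidence in one image resolves weak evidence in another. The following is a minimal, illustrative sketch of that idea only, not the authors' implementation. It assumes correspondences are already available as a hypothetical edge list, uses a simple Potts smoothness cost (a hypothetical `smooth` penalty), and runs synchronous min-sum loopy BP in a hypothetical function `loopy_bp`. The EM parameter learning and the image-level tag model from the paper are omitted.

```python
import numpy as np


def loopy_bp(unary, edges, smooth=1.0, iters=20):
    """Min-sum loopy belief propagation for a Potts MRF (illustrative sketch).

    unary:  (N, L) array; cost of giving each node (pixel) each label,
            where a low cost means strong local visual evidence.
    edges:  list of undirected (i, j) pairs; assumed here to come from dense
            correspondence between similar images (hypothetical input).
    smooth: Potts penalty paid when two connected nodes disagree.
    Returns, for each node, the label that minimizes its belief.
    """
    n, num_labels = unary.shape
    nbrs = [[] for _ in range(n)]
    for i, j in edges:
        nbrs[i].append(j)
        nbrs[j].append(i)
    zero = np.zeros(num_labels)
    # msgs[(i, j)] is the message from node i to node j: a cost per label of j.
    msgs = {(i, j): zero.copy() for a, b in edges for (i, j) in ((a, b), (b, a))}
    for _ in range(iters):
        new = {}
        for (i, j) in msgs:
            # Local cost at i plus messages from all neighbours except j.
            h = unary[i] + sum((msgs[(k, i)] for k in nbrs[i] if k != j), zero)
            # Potts minimization: m(l_j) = min(h[l_j], min(h) + smooth).
            m = np.minimum(h, h.min() + smooth)
            new[(i, j)] = m - m.min()  # normalize so values stay bounded
        msgs = new  # synchronous update
    beliefs = unary + np.array(
        [sum((msgs[(k, i)] for k in nbrs[i]), zero) for i in range(n)]
    )
    return beliefs.argmin(axis=1)


# Toy example: three pixels linked by (hypothetical) correspondences.
labels = ["sky", "sea"]
unary = np.array([[0.0, 5.0],   # strong local evidence for "sky"
                  [1.2, 1.0],   # ambiguous, leans slightly towards "sea"
                  [0.6, 0.5]])  # weak evidence, leans slightly towards "sea"
edges = [(0, 1), (1, 2)]

print("independent:", [labels[k] for k in unary.argmin(axis=1)])
# independent: ['sky', 'sea', 'sea']
print("propagated: ", [labels[k] for k in loopy_bp(unary, edges, smooth=2.0)])
# propagated:  ['sky', 'sky', 'sky']
```

Labeling each pixel independently follows its own weak evidence. With propagation, the confidently labeled pixel pulls its weakly supported neighbours to the same label, which mirrors the strong-to-weak propagation described in the abstract. On this tree-shaped toy graph, min-sum BP is exact. On a real image graph, which contains loops, the result is only approximate.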

@inproceedings{Rubinstein12Annotation, author = {Michael Rubinstein and Ce Liu and William T. Freeman}, title = {Annotation Propagation in Large Image Databases via Dense Image Correspondence}, booktitle = {European Conference on Computer Vision (ECCV)}, year = {2012}, pages = {85--99}, publisher = {Springer} }

Paper: PDF

Supplemental: PDF

ECCV'12 Poster: PDF (60 MB)

Last updated: Jun 2013