My research combines computation with the intelligence of crowds—large groups of people connecting and coordinating online—to create hybrid human-computer systems. Combining machine and crowd intelligence opens up a broad new class of software systems that can solve problems neither could solve alone. However, while crowds are increasingly adept at straightforward parallel tasks, they struggle to accomplish many goals: participants vary in quality, well-intentioned contributions can introduce errors, and future participants amplify and propagate those errors. Moreover, crowds that can generate the necessary information might not even exist yet, or their knowledge might be distributed across the web.

My work in human-computer interaction develops computational techniques to overcome these limits in crowdsourcing. These solutions adopt a broad view of how we might integrate crowds and computation: 1) embedding crowd work into interactive systems, 2) creating new crowds by designing social computing systems, and 3) mining crowd data for interactive applications.

Crowd-Powered Systems

I create systems that are powered by crowd intelligence: these systems advance crowdsourcing from a batch platform to one that is interactive and realtime. To embed crowds as first-order building blocks in software, we need to address problems in crowd work quality and latency. This research develops computational techniques that decompose complex tasks into simpler, verifiable steps, return results in seconds, and open up a new design space of interactive systems.

To instantiate these ideas, I developed a crowd-powered word processor called Soylent that uses paid micro-contributions to aid writing tasks such as text shortening and proofreading. Using Soylent is like having an entire editorial staff available as you write. For example, crowds shorten the user's writing by finding wordy text and offering alternatives that the user might not have considered. The user selects from these alternatives using a slider that specifies the desired text length. On average, Soylent shortens text to 85% of its original length. By using tighter wording rather than wholesale cuts, the system shortens a ten-page draft by a page and a half in only a few minutes. Soylent also offers human-powered proofreading and natural-language macros.

Bernstein, M., Little, G., Miller, R.C., et al. Soylent: A Word Processor with a Crowd Inside. In Proc. UIST 2010. ACM Press. Best Student Paper Award. [PDF] [Video] [Software] [Slides]

To improve Soylent's crowd work quality, I developed a design pattern called Find-Fix-Verify that guides workers through open-ended editing processes. Traditional approaches that ask workers on Mechanical Turk to shorten or proofread text do not work: lazy workers all focus on the simplest problem and overeager workers introduce undesired changes. Find-Fix-Verify splits complex tasks into creation and review stages that use independent agreement to produce reliable results.
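The three stages can be sketched in a few lines of Python. This is only a toy illustration over pre-collected worker responses, not Soylent's implementation: the function name `find_fix_verify`, the `min_agree` threshold, and the majority-vote rule in the Verify stage are all assumptions made for the example.

```python
from collections import Counter


def find_fix_verify(text, find_votes, fix_proposals, verify_votes, min_agree=2):
    """Toy Find-Fix-Verify pass over pre-collected worker responses.

    find_votes:    list of spans (substrings) flagged by Find workers
    fix_proposals: dict mapping span -> list of rewrites from Fix workers
    verify_votes:  dict mapping rewrite -> list of bool votes from Verify workers
    All names and thresholds here are hypothetical.
    """
    # Find: keep only spans flagged independently by at least min_agree workers.
    counts = Counter(find_votes)
    patches = [s for s, n in counts.items() if n >= min_agree and s in text]

    results = {}
    for span in patches:
        # Fix: candidate rewrites come from a separate set of workers.
        candidates = fix_proposals.get(span, [])
        # Verify: keep rewrites accepted by a strict majority of a third set.
        accepted = []
        for candidate in candidates:
            votes = verify_votes.get(candidate, [])
            if sum(votes) * 2 > len(votes):
                accepted.append(candidate)
        if accepted:
            results[span] = accepted
    return results


text = "The system is, in point of fact, very fast."
alternatives = find_fix_verify(
    text,
    find_votes=["in point of fact", "in point of fact", "very"],
    fix_proposals={"in point of fact": ["in fact", "actually", "in pont of fact"]},
    verify_votes={
        "in fact": [True, True, False],
        "actually": [True, False, False],
        "in pont of fact": [False, False],
    },
)
```

In this example only the span that two Find workers flagged survives, and the Verify votes filter out both the weak rewrite and the one containing a typo, which is the kind of error an overeager worker might introduce.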

Many crowd-powered systems need responses in seconds, not minutes. For example, digital camera users want to preview their photos immediately, before typical crowds could finish any work. In response, I developed Adrenaline, which can recruit workers in two seconds and execute large searches in ten seconds. This realtime crowdsourcing can complete typical tasks such as votes in just five seconds, and can also power complex interactive systems such as digital cameras. An Adrenaline camera captures a short video instead of one frame, then uses the crowd to decide on the best moment. This camera can identify the best smile, catch subjects in mid-air jumps, and decide on the best angle available, all in seconds.

Bernstein, M., Brandt, J., Miller, R., and Karger, D. Crowds in Two Seconds: Enabling Realtime Crowd-Powered Interfaces. In Proc. UIST 2011. ACM Press. [PDF] [Video] [Software] [Slides]

Adrenaline's recruitment approach, called the retainer model, pays workers a small wage to be on call and respond quickly when asked. Its insight is that algorithms can guide realtime crowds to complete tasks more quickly than even the fastest individual crowd member. The Adrenaline camera thus focuses workers' attention on areas where they are likely to agree, reducing a large search space to a final answer in a few seconds.
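One way to picture this narrowing is the sketch below. It assumes a simplified setting that is not taken from the Adrenaline paper: each worker's current position is a frame index in the video, and the search range shrinks whenever enough workers cluster inside a window covering a fixed fraction of it. The function `refine` and the parameters `agree_frac` and `shrink` are hypothetical.

```python
def refine(lo, hi, positions, agree_frac=0.5, shrink=0.25):
    """One refinement step over the frame range [lo, hi).

    positions: frame index each worker is currently viewing.
    Returns a narrowed (lo, hi) if at least agree_frac of the workers fall
    inside one window of width shrink * (hi - lo); otherwise the old range.
    """
    if not positions:
        return lo, hi
    width = max(1, int((hi - lo) * shrink))
    needed = agree_frac * len(positions)
    best = None  # (workers inside, window start)
    for start in range(lo, hi - width + 1):
        inside = sum(start <= p < start + width for p in positions)
        if inside >= needed and (best is None or inside > best[0]):
            best = (inside, start)
    if best is None:
        return lo, hi  # no agreement yet, so keep the current range
    return best[1], best[1] + width


# Three of four workers hover near frame 42, so the range collapses around them.
narrowed = refine(0, 100, [40, 42, 45, 90])
```

Calling `refine` repeatedly as workers move would shrink the range toward a single frame; the real system's agreement criteria and timing are not specified here.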

Creating Crowds: The Design of Social Systems

When existing crowds are not appropriate for the goal, I design social systems that create new crowds. I pair these designs with studies of online communities.

I create crowds that collect information unknown to most people. For example, I introduced the concept of friendsourcing, which collects information known only to members of a social network. Collabio, my friendsourced person-tagging application, gathered over 29,000 friend-authored tags on thousands of individuals on Facebook. Evaluations demonstrated that the vast majority of this information is not available elsewhere on the web. Collabio tags have already been used to create personalized news feeds, question-routing systems, and social network exploration tools.

Bernstein, M., Tan, D., Smith, G., Czerwinski, M., and Horvitz, E. Personalization via Friendsourcing. ACM TOCHI 17(2). 2010. [PDF] [Video] [Software] [Slides]

Friendsourcing applications can also amplify existing social interactions. I developed a friendsourced blog reader called FeedMe that helps active web readers share interesting content, then observes those shares to learn recipient interest models and improve its sharing recommendations. In effect, FeedMe is a social collaborative filtering engine. Other researchers have also applied friendsourcing for applications such as adding edges to social network graphs.

I complement these systems with empirical work studying existing crowds and communities. For example, researchers and practitioners often assume that strong user identity and permanent archives are critical to online communities. However, communities like 4chan /b/ attract millions of users without identity systems or archives. Our investigation of five million posts on /b/ was the first large-scale analysis of an anonymous, ephemeral social system. We found that /b/ moves incredibly quickly: the median thread spends just five seconds on the first page and less than five minutes on the site before new content replaces it. Over 90% of posts are made by fully anonymous users, with other identity signals adopted and discarded at will. The lack of user identity and archives produces a fast-churning ecosystem that encourages experimentation and likely plays a strong role in /b/’s ability to create well-known memes and drive internet culture.

Bernstein, M., Monroy-Hernández, A., Harry, D., et al. 4chan and /b/: An Analysis of Anonymity and Ephemerality in a Large Online Community. AAAI ICWSM. 2011. Best Paper Award. [PDF] [Talk] [Slides]

Interactive Crowd Data

When crowds browse the web or use social media, they leave activity traces that can be mined for interactive applications. I build user experiences that utilize this data, as well as tools that allow end users to directly explore it. These goals often create opportunities for algorithms in data mining and information retrieval.

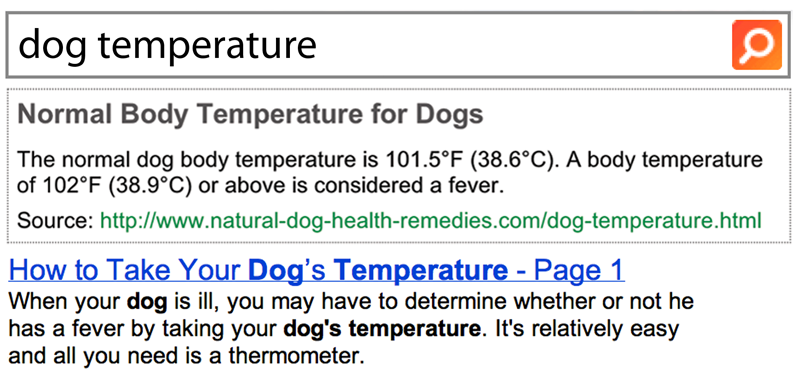

Query logs offer an opportunity to rethink not just search ranking, but the entire search user experience. Inspired by this goal, I created Tail Answers, which return direct results for thousands of information needs like conference submission deadlines, the average body temperature for dogs, and molasses substitutes. I mine search logs to find common session endpoints for informational queries, then use paid crowds to extract the answer from endpoint webpages. Tail Answers significantly improve subjective ratings of search quality and users’ ability to solve their information needs without clicking on a result.

Bernstein, M., Teevan, J., Liebling, D., Dumais, S., and Horvitz, E. Direct Answers for Search Queries in the Long Tail. ACM CHI. 2012.
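The log-mining step for Tail Answers, finding queries whose sessions tend to end on the same page, might look roughly like the sketch below. The session format, the function name `common_endpoints`, and the thresholds `min_sessions` and `min_share` are assumptions for illustration only; the actual pipeline and its filters are not described in this statement.

```python
from collections import Counter, defaultdict


def common_endpoints(sessions, min_sessions=3, min_share=0.6):
    """Return {query: url} for queries whose sessions usually end on one page.

    sessions: iterable of (query, [visited urls in order]) pairs.
    A query qualifies if it has at least min_sessions sessions with clicks
    and its most common final URL accounts for at least min_share of them.
    """
    ends = defaultdict(Counter)
    for query, urls in sessions:
        if urls:  # skip sessions with no clicks
            ends[query][urls[-1]] += 1

    result = {}
    for query, counter in ends.items():
        total = sum(counter.values())
        url, hits = counter.most_common(1)[0]
        if total >= min_sessions and hits / total >= min_share:
            result[query] = url
    return result


log = [
    ("dog temperature", ["a.example", "vet.example/temp"]),
    ("dog temperature", ["vet.example/temp"]),
    ("dog temperature", ["b.example"]),
    ("rare query", ["x.example"]),
]
endpoints = common_endpoints(log)
```

The pages returned by a step like this are the ones that would be handed to paid crowds for answer extraction.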

By analyzing social data feeds, applications can also help users make sense of their network and unfolding events. I developed a Twitter topic browser called Eddi that introduces a web search technique for mining open-world topics out of microblog updates. Using this TweeTopic algorithm, Eddi users can filter and explore their feed via implicit topics like research, C++, or a favorite celebrity. TweeTopic outperforms existing topic modeling approaches for Twitter browsing, and the interface doubles users’ ability to find worthwhile content.

Bernstein, M., Suh, B., Hong, L., Chen, J., Kairam, S., Chi, E.H. Eddi: Interactive Topic-Based Browsing of Social Status Streams. ACM UIST. 2010. [PDF] [Video] [Slides]