Generic Object Recognition Through Visual Cue Decomposition and Adaptive Combination

Overview

The goal of this work is to build a computational system that can identify object categories within images. To this end, this work proposes a computational model of "recognition-through-decomposition-and-fusion" based on the psychophysical theories of information dissociation and integration in human visual perception. At the lowest level, contour and texture processes are defined and measured. At the mid-level, a novel coupled Conditional Random Field model is proposed to model and decompose the contour and texture processes in natural images. Various matching schemes are introduced to match the decomposed contour and texture channels in a dissociative manner. As a counterpart to the integrative process in the human visual system, adaptive combination is applied to fuse the perception in the decomposed contour and texture channels.
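
To make the idea of adaptive combination concrete, the following is a minimal sketch and not the method used in this work. It assumes each channel has already produced a per-class matching score for every image, and it picks, for each class, the contour weight that best ranks that class's images above all others on held-out data. The function names, the weight grid, the ranking criterion, and the toy scores are all hypothetical choices made for illustration.

```python
from typing import List, Sequence


def pairwise_ranking_accuracy(pos: Sequence[float], neg: Sequence[float]) -> float:
    """Fraction of (positive, negative) pairs in which the positive scores strictly higher."""
    pairs = [(p, n) for p in pos for n in neg]
    if not pairs:
        return 0.0
    return sum(p > n for p, n in pairs) / len(pairs)


def fit_class_weights(contour: List[List[float]], texture: List[List[float]],
                      labels: List[int], n_classes: int,
                      grid: Sequence[float]) -> List[float]:
    """For each class, choose the contour weight w in `grid` that maximizes
    one-vs-rest ranking accuracy of w * contour + (1 - w) * texture.
    Ties are broken in favor of the first (smallest) grid value."""
    weights = []
    for k in range(n_classes):
        best_w, best_acc = grid[0], -1.0
        for w in grid:
            fused = [w * c[k] + (1.0 - w) * t[k] for c, t in zip(contour, texture)]
            pos = [s for s, y in zip(fused, labels) if y == k]
            neg = [s for s, y in zip(fused, labels) if y != k]
            acc = pairwise_ranking_accuracy(pos, neg)
            if acc > best_acc:
                best_w, best_acc = w, acc
        weights.append(best_w)
    return weights


def fuse(contour_row: Sequence[float], texture_row: Sequence[float],
         weights: Sequence[float]) -> List[float]:
    """Per-class convex combination of the two channels' scores for one image."""
    return [w * c + (1.0 - w) * t for w, c, t in zip(weights, contour_row, texture_row)]


# Toy held-out scores (rows: images, columns: classes). Hypothetical numbers:
# class 0 is separated by contour, class 1 by texture.
labels = [0, 0, 1, 1]
contour = [[0.9, 0.6], [0.8, 0.7], [0.1, 0.3], [0.2, 0.4]]
texture = [[0.3, 0.1], [0.4, 0.2], [0.6, 0.9], [0.7, 0.8]]
grid = [i / 10 for i in range(11)]

weights = fit_class_weights(contour, texture, labels, n_classes=2, grid=grid)
predictions = [max(range(2), key=lambda k, c=c, t=t: fuse(c, t, weights)[k])
               for c, t in zip(contour, texture)]
```

In this toy setup the fitted contour weight is larger for the class whose contour scores are informative and smaller for the class whose texture scores are informative, which is the behavior an adaptive per-class fusion is meant to capture.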

The proposed coupled Conditional Random Field model is shown to be an important extension of popular single-layer Random Field models for modeling image

processes, by dedicating a separate layer of random field grid to each individual image process and capturing the distinct properties of multiple visual processes. The

decomposition enables the system to fully leverage each decomposed visual stimulus

to its full potential in discriminating different object classes. Adaptive combination

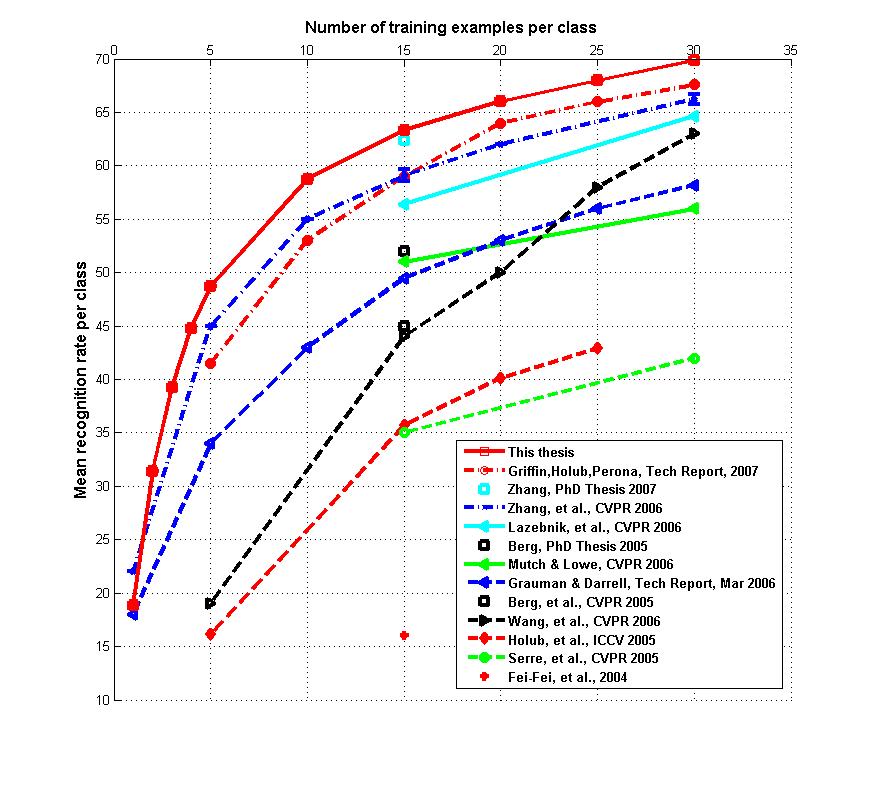

of multiple visual cues well mirrors the fact that different visual cues play different roles in distinguishing various object classes. Experimental results demonstrate

that the proposed computational model of "recognition-through-decomposition-and-fusion" achieves better performance than most of the state-of-the-art methods in recognizing the objects in Caltech-101, especially when only a limited number of training

samples are available, which conforms with the capability of learning to recognize a

class of objects from a few sample images in the human visual system.

Publications

X. Ma and W. Eric L. Grimson. Learning Coupled Conditional Random Field for Image Decomposition with Application on Object Categorization. To appear, Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2008.

Learning Coupled Conditional Random Field for Image Decomposition: Theory and Application in Object Categorization