| Tianfan Xue1 | Andrew Owens2 | Daniel Scharstein3 | Michael Goesele4 | Richard Szeliski5 |

| 1MIT CSAIL 2University of California, Berkeley 3Middlebury College 4Technische Universität Darmstadt 5Facebook |

We present a multi-frame narrow-baseline stereo matching algorithm based on extracting and matching edges across multiple frames. Edge matching allows us to focus on the important features at the very beginning, and deal with occlusion boundaries as well as untextured regions. Given the initial sparse matches, we fit overlapping local planes to form a coarse, over-complete representation of the scene. After breaking up the reference image in our sequence into superpixels, we perform a Markov random field optimization to assign each superpixel to one of the plane hypotheses. Finally, we refine our continuous depth map estimate using a piecewise-continuous variational optimization. Our approach successfully deals with depth discontinuities, occlusions, and large textureless regions, while also producing detailed and accurate depth maps. We show that our method out-performs competing methods on high-resolution multi-frame stereo benchmarks and is well-suited for view interpolation applications.
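As a rough illustration of the local plane-fitting step, the sketch below fits a slanted plane d = a*x + b*y + c to a handful of sparse (x, y, disparity) samples by ordinary least squares. The function name `fit_plane` and the choice of plain least squares are assumptions made for illustration; the fitting procedure used in the paper may differ (for example, it may be robust to outliers).

```python
def fit_plane(samples):
    """Least-squares fit of d = a*x + b*y + c to (x, y, d) samples.

    Hypothetical helper for illustration only; not the paper's code.
    """
    n = 0
    sx = sy = sd = sxx = sxy = syy = sxd = syd = 0.0
    for x, y, d in samples:
        n += 1
        sx += x
        sy += y
        sd += d
        sxx += x * x
        sxy += x * y
        syy += y * y
        sxd += x * d
        syd += y * d

    # Normal equations M * (a, b, c)^T = rhs.
    m = [[sxx, sxy, sx],
         [sxy, syy, sy],
         [sx, sy, float(n)]]
    rhs = [sxd, syd, sd]

    def det3(a):
        return (a[0][0] * (a[1][1] * a[2][2] - a[1][2] * a[2][1])
                - a[0][1] * (a[1][0] * a[2][2] - a[1][2] * a[2][0])
                + a[0][2] * (a[1][0] * a[2][1] - a[1][1] * a[2][0]))

    det = det3(m)
    if abs(det) < 1e-12:
        raise ValueError("samples are collinear or too few to define a plane")

    # Cramer's rule: replace one column at a time with the right-hand side.
    coeffs = []
    for col in range(3):
        mc = [row[:] for row in m]
        for r in range(3):
            mc[r][col] = rhs[r]
        coeffs.append(det3(mc) / det)
    return tuple(coeffs)


# Synthetic edge samples lying exactly on d = 0.5*x - 0.25*y + 10.
samples = [(x, y, 0.5 * x - 0.25 * y + 10.0)
           for x, y in [(0, 0), (4, 0), (0, 4), (4, 4), (2, 1)]]
a, b, c = fit_plane(samples)
```

At least three non-collinear samples are needed; otherwise the normal equations are singular and the sketch raises an error rather than returning an arbitrary plane.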

@article{xue2019multistereo, title={Multi-frame stereo matching with edges, planes, and superpixels}, author={Xue, Tianfan and Owens, Andrew and Scharstein, Daniel and Goesele, Michael and Szeliski, Richard}, journal={Image and Vision Computing}, volume={91}, year={2019}, publisher={Elsevier} }

Downloads: PDF

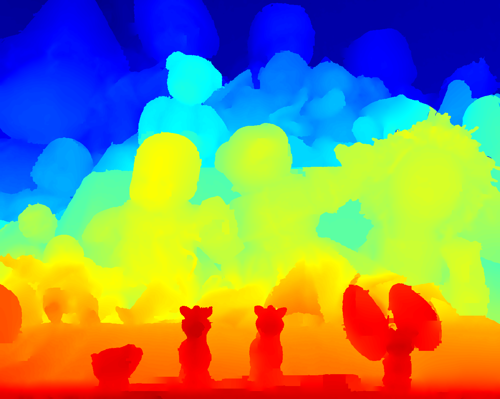

Please mouse over or click on the labels beneath each image to switch between them (it might take 1-2 seconds to load an image since the images are very large). Two corresponding close-up views are shown beside each sequence.

Legend:

- Captured: the actual image captured at that view, which serves as the ground truth

- Interp using SGM depth: interpolated image using the depth map recovered by SGM.

- Interp using our depth: interpolated image using the depth map recovered by our technique

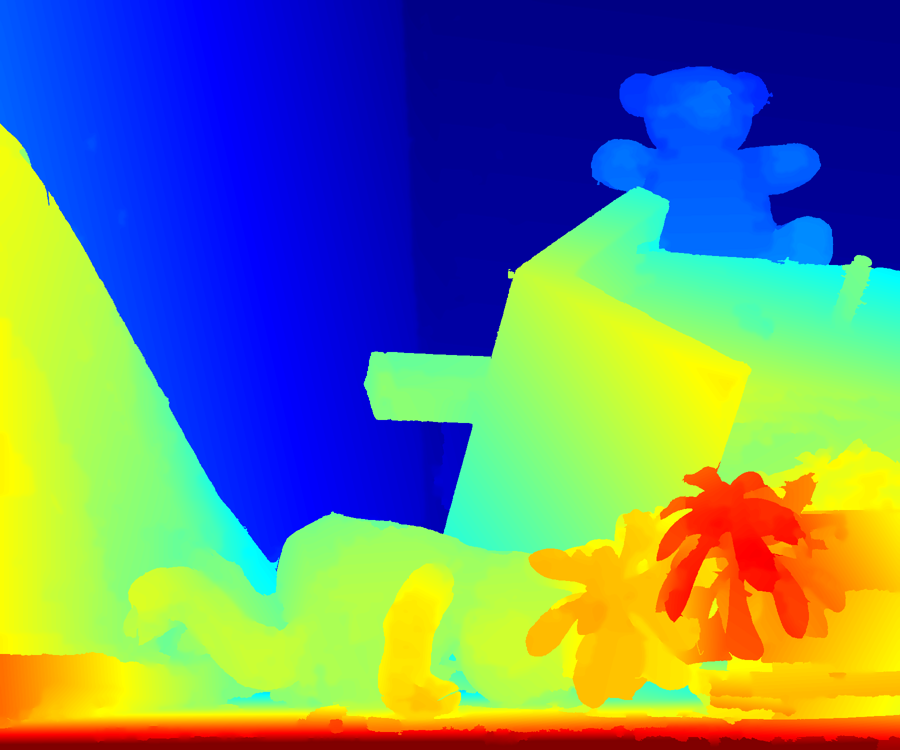

Teddy

In the interpolated view using SGM depth, some background pixels near depth boundaries are missing. For example, both the digit 2 in the top patch and the digit 4 in the bottom patch are missing (compare SGM with the ground truth). This is because the depth at these regions is incorrect due to foreground fattening. Such errors do not exist in our result.
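The effect of foreground fattening on view interpolation can be reproduced on a toy scanline. The sketch below forward-warps one row of pixels to an intermediate view, letting the pixel with the larger disparity (the nearer one) win where several pixels land on the same target. The function `forward_warp`, the integer rounding, and the toy data are assumptions for illustration, not the rendering procedure used for the results on this page.

```python
def forward_warp(colors, disparity, t):
    """Forward-warp one scanline to a view at fraction t of the baseline.

    Hypothetical helper: pixels shift left by round(t * disparity), and a
    z-buffer keeps the pixel with the largest disparity at each target.
    Unfilled targets (disocclusions) stay None.
    """
    out = [None] * len(colors)
    zbuf = [float("-inf")] * len(colors)
    for x, (color, d) in enumerate(zip(colors, disparity)):
        xt = x - int(round(t * d))
        if 0 <= xt < len(out) and d > zbuf[xt]:
            out[xt] = color
            zbuf[xt] = d
    return out


def show(row):
    return "".join(c if c is not None else "_" for c in row)


# Lowercase letters are background, "GHI" is a foreground object.
colors = list("abcdefGHIjkl")
true_disp = [0, 0, 0, 0, 0, 0, 4, 4, 4, 0, 0, 0]
# Fattened depth: background pixels "e" and "f" wrongly get the
# foreground disparity.
fat_disp = [0, 0, 0, 0, 4, 4, 4, 4, 4, 0, 0, 0]

good = show(forward_warp(colors, true_disp, 0.5))
bad = show(forward_warp(colors, fat_disp, 0.5))
```

With the correct disparities the background up to `d` survives next to the shifted object. With the fattened disparities, `e` and `f` travel with the foreground and overwrite `c` and `d`, so those background pixels go missing near the boundary.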

Disney-Mansion

In the interpolated view using the depth calculated by SGM, there are "halos" around the leaves (top patch) and the spike (bottom patch) due to foreground fattening, while the boundaries of these objects are much cleaner in our result.

Below we show synthesized videos using our depth maps. The first and the last frame of each sequence are used as input and the remaining frames are generated by view interpolation.

Teddy

Disney-Mansion

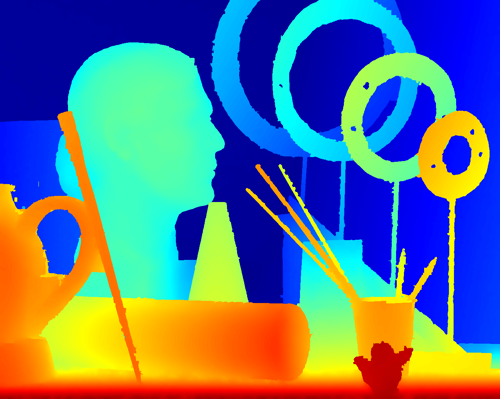

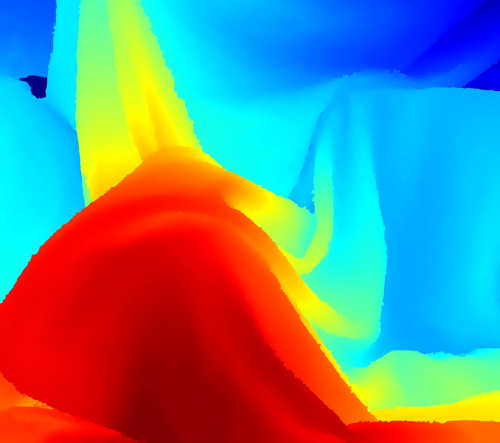

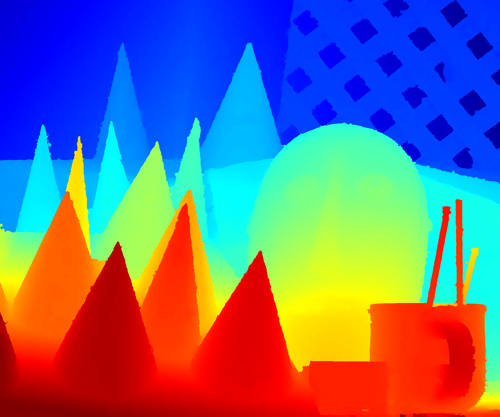

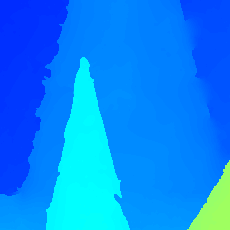

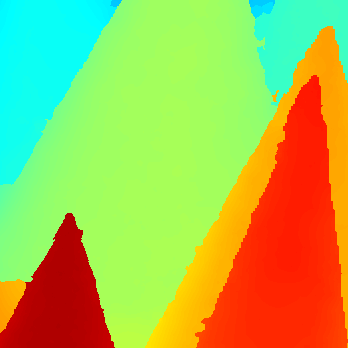

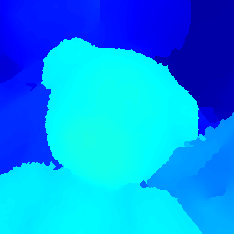

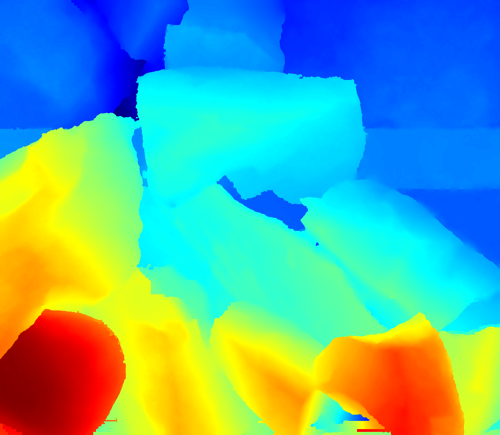

2. Results on Midd-F

We provide depth maps recovered by our technique on Midd-F, along with intermediate results (depth of edges and depth of patches), and a comparison with SGM. Please mouse over or click on the labels beneath each image to switch between them (it might take 1-2 seconds to load an image since the images are very large). Two corresponding close-up views are shown beside each sequence.

Legend:

- Our depth: the estimated depth map by our algorithm

- SGM depth: the estimated depth map by the SGM algorithm

- Our error, SGM error: black regions are errors in non-occluded regions, and gray regions are errors in occluded regions (threshold=2.0).

- Edge depth: sparse depth map of edges by edge matching.

- Patch depth: depth of the overlapping slanted planes derived from the edges. The patch size is 32x32 for all sequences, but since the sequences have different image sizes, the patches may appear to differ in size.
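The error maps in the legend above can be summarized as bad-pixel percentages. The sketch below counts pixels whose estimated value differs from the ground truth by more than the threshold, separately for non-occluded and occluded regions. The function name `bad_pixel_rates`, the nested-list data layout, the strict `>` comparison, and the use of `None` for pixels without ground truth are assumptions for illustration; this is not necessarily how the error rates reported on this page were computed.

```python
def bad_pixel_rates(est, gt, occluded, threshold=2.0):
    """Return (non-occluded %, occluded %) of pixels with error > threshold.

    Hypothetical helper. `est` and `gt` are 2D lists of values, `occluded`
    is a 2D list of booleans, and `gt` entries may be None when unknown.
    """
    bad = {False: 0, True: 0}
    total = {False: 0, True: 0}
    for e_row, g_row, o_row in zip(est, gt, occluded):
        for e, g, occ in zip(e_row, g_row, o_row):
            if g is None:
                continue  # skip pixels without ground truth
            occ = bool(occ)
            total[occ] += 1
            if abs(e - g) > threshold:
                bad[occ] += 1

    def percent(key):
        return 100.0 * bad[key] / total[key] if total[key] else 0.0

    return percent(False), percent(True)


# Tiny 2x4 example; the last column is marked as occluded.
est = [[10, 10, 10, 10],
       [20, 25, 20, 20]]
gt = [[10, 11, 13, 10],
      [20, 20, None, 23]]
occ = [[False, False, False, True],
       [False, False, False, True]]

nonocc_rate, occ_rate = bad_pixel_rates(est, gt, occ)
```

In this toy example, two of the five non-occluded pixels with ground truth and one of the two occluded pixels exceed the threshold of 2.0.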

Aloe

Our depth, error rate=3.08

In the SGM depth, the leaf shown in the top patch is thicker than it should be, and there are also errors between the two leaves shown in the bottom patch. These errors do not exist in our depth map. Most errors in our depth occur at the leaf protruding towards the viewer and along other untextured leaf regions, in which few edges were detected (see edge depth).

Art

Our depth, error rate=4.28

The boundaries of the three pens shown in the top patch are mostly accurate in our depth map, but are thicker than the ground truth in the SGM depth map. Our algorithm also produces fewer errors on the background (see both patches).