
I am a fifth-year PhD student at MIT. My research focuses primarily on surface appearance modeling. More specifically, I am interested in material acquisition, modeling and validation. My advisor is Frédo Durand. For my master's thesis, I worked on an image-based scanning system based on the opacity hull. We extended the image-based visual hull algorithm to handle continuous opacity and to interpolate between views during rendering. The work was also extended to handle transparent and refractive objects using environment matting techniques. As an undergraduate at Princeton University, I worked on a 3D model sketching system with a gestural interface (Princeton Graphics). Before that, I was involved in a project on acoustic modeling using beam tracing techniques.

Current/Past Collaborators/Advisors: Frédo Durand, Wojciech Matusik, Leonard McMillan, Hanspeter Pfister, Tim Weyrich, Remo Ziegler, Paul Beardsley, Adam Finkelstein, Lee Markosian, Lena Petrović, Thomas Funkhouser, Nicholas Tsingos, Ingrid Carlbom.

Publications/Patents:


Acquisition and Modeling of Material Appearance

1. Addy Ngan. Acquisition and Modeling of Material Appearance. Ph.D. Dissertation, Massachusetts Institute of Technology, 2006. [PDF]


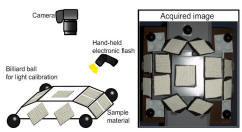

Statistical Acquisition of Texture Appearance

We propose a simple method to acquire and reconstruct material appearance from sparsely sampled data. Our technique renders elaborate view- and light-dependent effects and faithfully reproduces materials such as fabrics and knitwear. Our approach uses sparse measurements to reconstruct a full six-dimensional Bidirectional Texture Function (BTF). Our reconstruction only requires the input images from the top view to be registered, which is easy to achieve with a fixed camera setup. Bidirectional properties are acquired from a sparse set of viewing directions through image statistics, so precise registration of these views is unnecessary. Our technique is based on multi-scale histograms of image pyramids. The full BTF is generated by matching the corresponding pyramid histograms to interpolated top-view images. We show that the use of multi-scale image statistics achieves a visually plausible appearance. However, our technique does not fully capture sharp specularities or the geometric aspects of parallax. Nonetheless, a large class of materials can be reproduced well with our technique, and our statistical characterization enables efficient acquisition of such materials using a simple setup.

1. Addy Ngan and Frédo Durand. Statistical Acquisition of Texture Appearance. To appear, Eurographics Symposium on Rendering 2006. [preprint PDF]
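The histogram matching mentioned in the abstract above can be illustrated at a single scale. The following is only a minimal sketch of the basic operation, assuming flat lists of pixel intensities with equal length; the function name `match_histogram` is hypothetical, and the actual method applies matching across the levels of an image pyramid, which is not shown here.

```python
def match_histogram(source, target):
    """Remap `source` so that its value distribution equals that of `target`.

    Both arguments are flat lists of pixel intensities. This sketch assumes
    they have the same length, so matching reduces to a rank-for-rank swap.
    """
    if len(source) != len(target):
        raise ValueError("this sketch assumes equal pixel counts")
    # Indices of source pixels, ordered from darkest to brightest.
    order = sorted(range(len(source)), key=lambda i: source[i])
    # Target intensities, ordered from darkest to brightest.
    ranked_target = sorted(target)
    out = [0.0] * len(source)
    # The k-th darkest source pixel receives the k-th darkest target value,
    # so spatial structure comes from `source` and statistics from `target`.
    for rank, idx in enumerate(order):
        out[idx] = ranked_target[rank]
    return out


matched = match_histogram([0.2, 0.9, 0.5], [10, 30, 20])
```

After matching, the output keeps the spatial ordering of the source pixels while carrying exactly the intensity distribution of the target.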


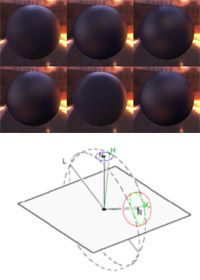

Image-driven Navigation of Analytical BRDF Models

Specifying parameters of analytic BRDF models is a difficult task, as these parameters are often not intuitive for artists and their effect on appearance can be non-uniform. Ideally, a given step in the parameter space should produce a predictable and perceptually uniform change in the rendered image. Systems that employ psychophysics have produced important advances in this direction; however, the requirement of user studies limits the scalability of these approaches. In this work, we propose a new and intuitive method for designing material appearance. First, we define a computational metric between BRDFs that is based on rendered images of a scene under natural illumination. We show that our metric produces results that agree with previous perceptual studies. Next, we propose a user interface that allows for navigation in the remapped parameter space of a given BRDF model. For the current settings of the BRDF parameters, we display a choice of variations corresponding to uniform steps according to our metric, in the various parameter directions. In addition to the parametric navigation for a single model, we also support neighborhood navigation in the space of all models. By clustering a large number of neighbors and removing neighbors that are close to the current model, the user can easily visualize the alternate effects that can only be expressed with other models. We show that our interface is simple and intuitive. Furthermore, visual navigation in BRDF space, both within a single model and across the union of all models, is an effective approach to reflectance design.

1. Addy Ngan, Frédo Durand, Wojciech Matusik. Image-driven Navigation of Analytical BRDF Models. To appear, Eurographics Symposium on Rendering 2006. [preprint PDF]
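The idea of taking uniform steps according to an image-based metric can be sketched as follows. Everything here is an illustrative assumption rather than the paper's implementation: `render` is a hypothetical stand-in that samples a Phong-like lobe instead of rendering a scene under natural illumination, `image_distance` is a plain RMS difference, and `uniform_step` uses bisection to find the parameter value whose image lies at a chosen distance from the current one.

```python
import math


def render(exponent, samples=64):
    # Hypothetical stand-in for a renderer: a cos^n lobe sampled over
    # angles in [0, pi/2). A real system would render a full scene.
    return [math.cos(0.5 * math.pi * i / samples) ** exponent
            for i in range(samples)]


def image_distance(a, b):
    # Assumed metric for this sketch: RMS difference between two images.
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)) / len(a))


def uniform_step(exponent, target, upper=1e4, iters=60):
    """Find a larger exponent whose image is `target` away from the current one.

    For this toy lobe the distance grows monotonically with the exponent,
    so bisection on [exponent, upper] is sufficient.
    """
    base = render(exponent)
    if image_distance(base, render(upper)) < target:
        raise ValueError("target distance not reachable below `upper`")
    lo, hi = exponent, upper
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if image_distance(base, render(mid)) < target:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


next_exponent = uniform_step(10.0, 0.05)
```

Repeating `uniform_step` from each new value yields a sequence of parameter settings that are evenly spaced in image distance rather than in raw parameter units.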


Experimental Analysis of BRDF Models

A variety of analytical models have been proposed to represent BRDFs. However, validation of these models has been scarce due to the lack of high-resolution measured data. Building upon a recent dataset of over a hundred BRDFs acquired at high resolution, we evaluate the performance of several popular BRDF models in terms of their ability to fit the measured data. While previous work in BRDF modeling has validated individual models against some measurements, our work is the first to quantitatively compare different models based on one sizable dataset covering a wide class of materials. We believe this work can serve as a guide for practitioners in the field of appearance modeling to choose the model that best suits their needs.
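To give a sense of what fitting an analytic model to measured samples involves, here is a minimal sketch under strong simplifying assumptions. The one-dimensional model `kd + ks * cos(angle)**n`, the RMS error, the grid search over exponents, and the name `fit_lobe` are all illustrative choices, not the models, metric, or procedure used in the paper.

```python
import math


def fit_lobe(angles, measured, exponents):
    """Fit kd + ks * cos(angle)**n to samples; return (kd, ks, n, rms_error).

    For each candidate exponent n, kd and ks are solved in closed form by
    linear least squares; the exponent with the lowest RMS error is kept.
    """
    count = len(angles)
    sum_y = sum(measured)
    best = None
    for n in exponents:
        lobe = [math.cos(a) ** n for a in angles]
        sum_c = sum(lobe)
        sum_cc = sum(c * c for c in lobe)
        sum_cy = sum(c * y for c, y in zip(lobe, measured))
        det = count * sum_cc - sum_c * sum_c
        if abs(det) < 1e-12:
            continue  # degenerate basis, skip this exponent
        # Solution of the 2x2 normal equations for the basis {1, cos^n}.
        kd = (sum_y * sum_cc - sum_c * sum_cy) / det
        ks = (count * sum_cy - sum_c * sum_y) / det
        err = math.sqrt(sum((kd + ks * c - y) ** 2
                            for c, y in zip(lobe, measured)) / count)
        if best is None or err < best[3]:
            best = (kd, ks, n, err)
    return best


# Synthetic "measurements" generated from known parameters.
angles = [math.radians(i) for i in range(90)]
measured = [0.2 + 0.7 * math.cos(a) ** 20 for a in angles]
kd, ks, n, err = fit_lobe(angles, measured, range(1, 101))
```

Comparing the residual error of different models fitted to the same samples is the basic pattern behind a quantitative model comparison.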


Measurement-Based Skin Reflectance Model


Image-based 3D Scanning System using Opacity Hulls

We have built a system for acquiring and displaying high quality graphical models of objects that are impossible to scan with traditional scanners. Our system can acquire highly specular and fuzzy materials, such as fur and feathers. The hardware set-up consists of a turntable, two plasma displays, an array of cameras, and a rotating array of directional lights. We use multi-background matting techniques to acquire alpha mattes of the object from multiple viewpoints. The alpha mattes are used to construct an opacity hull. The opacity hull is a new shape representation, defined as the visual hull of the object with view-dependent opacity. It enables visualization of complex object silhouettes and seamless blending of objects into new environments. Our system also supports relighting of objects with arbitrary appearance using surface reflectance fields, a purely image-based appearance representation. Our system is the first to acquire and render surface reflectance fields under varying illumination from arbitrary viewpoints.
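As an illustration of how an alpha value can be recovered from images taken against different backgrounds, the sketch below uses the standard two-background triangulation formula. This is an assumption about the general technique, not a description of the system's actual pipeline, and the function name `alpha_from_two_backgrounds` is hypothetical. It relies on the compositing model C = F + (1 - alpha) * B with premultiplied foreground F.

```python
def alpha_from_two_backgrounds(c1, c2, b1, b2):
    """Estimate alpha for one pixel seen against two known backgrounds.

    c1, c2: observed RGB colors in front of backgrounds b1 and b2.
    Subtracting the two compositing equations cancels the foreground:
        c1 - c2 = (1 - alpha) * (b1 - b2)
    which is solved for alpha in the least-squares sense over RGB.
    """
    diff_c = [x - y for x, y in zip(c1, c2)]
    diff_b = [x - y for x, y in zip(b1, b2)]
    denom = sum(d * d for d in diff_b)
    if denom == 0:
        raise ValueError("backgrounds must differ at this pixel")
    alpha = 1.0 - sum(dc * db for dc, db in zip(diff_c, diff_b)) / denom
    # Clamp to the valid range to absorb measurement noise.
    return min(1.0, max(0.0, alpha))


# A pixel with premultiplied foreground (0.3, 0.1, 0.0) and alpha 0.6,
# composited over a blue and then a green background.
alpha = alpha_from_two_backgrounds(
    (0.3, 0.1, 0.4), (0.3, 0.5, 0.0), (0.0, 0.0, 1.0), (0.0, 1.0, 0.0))
```

The estimate is only well conditioned where the two backgrounds differ strongly, which is one reason to display several distinct background patterns.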


Acquisition and Rendering of Transparent and Refractive Objects

This paper introduces a new image-based approach to capturing and modeling highly specular, transparent, or translucent objects. We have built a system for automatically acquiring high quality graphical models of objects that are extremely difficult to scan with traditional 3D scanners. We use multi-background matting techniques to acquire alpha and environment mattes of the object from multiple viewpoints. Using the alpha mattes we reconstruct an approximate 3D shape of the object. We use the environment mattes to compute a high-resolution surface reflectance field. We also acquire a low-resolution surface reflectance field using the overhead array of lights. Both surface reflectance fields are used to relight the objects and to place them into arbitrary environments. Our system is the first to acquire and render transparent and translucent 3D objects, such as a glass of beer, from arbitrary viewpoints under novel illumination.

W. Matusik, H. Pfister, R. Ziegler, A. Ngan, L. McMillan. Acquisition and Rendering of Transparent and Refractive Objects. Proceedings of 13th Eurographics Workshop on Rendering. Pisa, Italy, June 2002. (Videos, Slides)


Freeform 3D Model Sketching

Subdivision surfaces are extremely suitable for our sketching system because of their support for arbitrary topology, their natural smoothing through refinement, and their ability to model creases and corners. Freeform Sketch is a direct modeling system we developed that utilizes gestural input for both modeling commands and geometric descriptions. It is part of an effort to greatly simplify the modeling process by sacrificing some precise control over the surface. However, with support for some basic primitives, in addition to editing tools including oversketching, trimming and joining, the class of shapes we are able to model exceeds that of previous work, with higher quality. One main focus of the thesis is the trimming operation, which is non-trivial for subdivision surfaces. We propose an algorithm that supports approximate trimming through remeshing and fitting, while adding no extra details to the surface.

Addy Ngan. Subdivision Models in a Freeform Sketching System. Undergraduate Thesis, Princeton University, 2001.


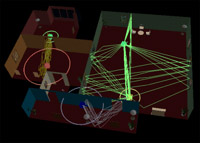

Beam Tracing Method for Interactive Architectural Acoustics

A difficult challenge in geometrical acoustic modeling is computing propagation paths from sound sources to receivers fast enough for interactive applications. Our paper describes a beam tracing method that enables interactive updates of propagation paths from a stationary source to a moving receiver. During a precomputation phase, we trace convex polyhedral beams from the location of each sound source, constructing a “beam tree” representing the regions of space reachable by potential sequences of transmissions, diffractions, and specular reflections at surfaces of a 3D polygonal model. Then, during an interactive phase, we use the precomputed beam trees to generate propagation paths from the source(s) to any receiver location at interactive rates. The key features of our beam tracing method are: 1) it scales to support large architectural environments, 2) it models propagation due to wedge diffraction, 3) it finds all propagation paths up to a given termination criterion without exhaustive search or risk of under-sampling, and 4) it updates propagation paths at interactive rates. We demonstrate use of this method for interactive acoustic design of architectural environments.
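The geometry of a single specular reflection path can be sketched with a mirrored (virtual) source. This is a heavily simplified illustration, assuming one infinite reflecting plane with a unit normal and no occlusion; it does not construct beams or a beam tree, and the function names are hypothetical.

```python
import math


def mirror_point(point, plane_point, normal):
    # Reflect `point` across the plane through `plane_point`.
    # `normal` is assumed to have unit length.
    d = sum((p - q) * n for p, q, n in zip(point, plane_point, normal))
    return tuple(p - 2.0 * d * n for p, n in zip(point, normal))


def reflection_path(source, receiver, plane_point, normal):
    """Return (hit_point, path_length) for one specular bounce, or None.

    The straight segment from the mirrored source to the receiver crosses
    the plane at the reflection point, and its length equals the length of
    the reflected path source -> hit_point -> receiver.
    """
    image = mirror_point(source, plane_point, normal)
    direction = [r - i for r, i in zip(receiver, image)]
    denom = sum(d * n for d, n in zip(direction, normal))
    if denom == 0:
        return None  # segment is parallel to the plane
    t = sum((q - i) * n for q, i, n in zip(plane_point, image, normal)) / denom
    if not 0.0 < t < 1.0:
        return None  # source and receiver are not on the same side
    hit = tuple(i + t * d for i, d in zip(image, direction))
    return hit, math.dist(image, receiver)


# Source and receiver one unit above the floor plane y = 0.
hit, length = reflection_path(
    (0.0, 1.0, 0.0), (2.0, 1.0, 0.0), (0.0, 0.0, 0.0), (0.0, 1.0, 0.0))
```

Because the mirrored source depends only on the source and the surface, it can be computed once and reused as the receiver moves, which hints at why precomputation from a stationary source pays off.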


Last Updated 06/06/2006