| Abe Davis¹ | Michael Rubinstein²,¹ | Neal Wadhwa¹ | Gautham Mysore³ | Frédo Durand¹ | William T. Freeman¹ |

| ¹MIT CSAIL | ²Microsoft Research | ³Adobe Research |

Abstract

When sound hits an object, it causes small vibrations of the object’s surface. We show how, using only high-speed video of the object, we can extract those minute vibrations and partially recover the sound that produced them, allowing us to turn everyday objects—a glass of water, a potted plant, a box of tissues, or a bag of chips—into visual microphones. We recover sounds from high-speed footage of a variety of objects with different properties, and use both real and simulated data to examine some of the factors that affect our ability to visually recover sound. We evaluate the quality of recovered sounds using intelligibility and SNR metrics and provide input and recovered audio samples for direct comparison. We also explore how to leverage the rolling shutter in regular consumer cameras to recover audio from standard frame-rate videos, and use the spatial resolution of our method to visualize how sound-related vibrations vary over an object’s surface, which we can use to recover the vibration modes of an object.

@article{Davis2014VisualMic,
  author = {Abe Davis and Michael Rubinstein and Neal Wadhwa and Gautham Mysore and Fredo Durand and William T. Freeman},
  title = {The Visual Microphone: Passive Recovery of Sound from Video},
  journal = {ACM Transactions on Graphics (Proc. SIGGRAPH)},
  year = {2014},
  volume = {33},
  number = {4},
  pages = {79:1--79:10}
}

Paper: PDF

** Patent pending

Supplementary material: link

SIGGRAPH presentation: zip (ppt + videos; 340MB)

| "Seeing" sound | |

| Code and data are now available for download |

Contact

For more information, please contact Abe Davis and Michael Rubinstein

Talks

New video technology that reveals an object's hidden properties, Abe Davis @ TED, March 2015

See invisible motion, hear silent sounds, Michael Rubinstein @ TEDxBeaconStreet, Nov 2014


Sounds Recovered from Videos

Below are some examples of sounds we recovered just from high-speed videos of objects. We will add more results soon. In the meantime, you can check out our video and supplementary material above.

Our audio results are best experienced using good speakers or, preferably, headphones.

(The reason we used "Mary Had a Little Lamb" in several of our demos: The Internet Archive)

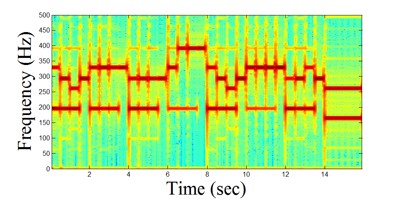

MIDI music recovered from a video of a bag of chips, and from a video of a plant:

| Video (representative frame) | Source sound | Sound recovered from video |
| --- | --- | --- |
| 700 x 400, 2200 Hz | | |

| Video (representative frame) | Source sound | Sound recovered from video |
| --- | --- | --- |
| 700 x 400, 2200 Hz | | |

Speech recovered from a video of a small patch on a bag of chips lying on a table in a room:

| Video (representative frame) | Source sound | Sound recovered from video |
| --- | --- | --- |
| 192 x 192, 20000 Hz | | |

A child's singing recovered from a video of a foil wrapper of a candy bar:

| Video (representative frame) | Source sound (0-8kHz) | Sound recovered from video (after manual denoising in Adobe Audition) |
| --- | --- | --- |
| 480 x 480, 6000 Hz | | |

We can use our motion magnification technique to visualize motions in objects induced by sound.

In the following video, we magnified motions in a bag of chips caused by music played close to it. Each motion-magnified video shows motions within a narrow temporal frequency band corresponding to one of the four dominant tones in the music. We highlight each motion-magnified video when its corresponding note is played. All the videos play throughout the clip, but motions at each frequency are visible only when the corresponding note is playing.

| Download: .mov (20MB) |

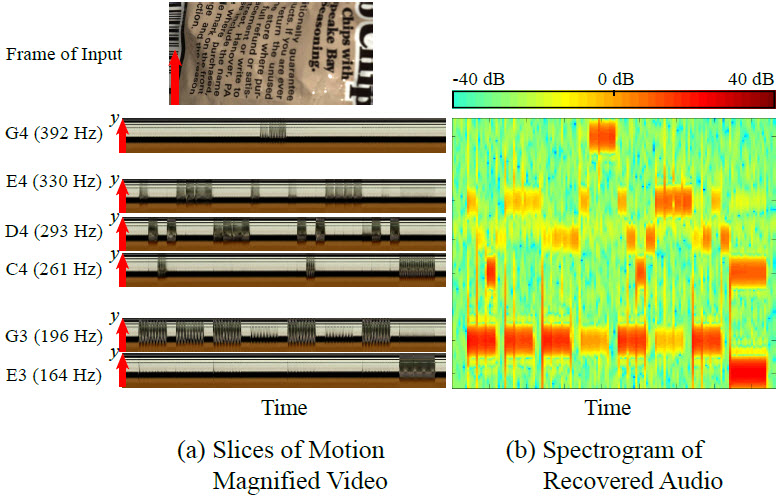

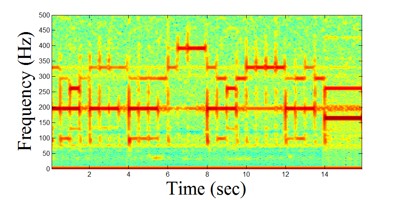

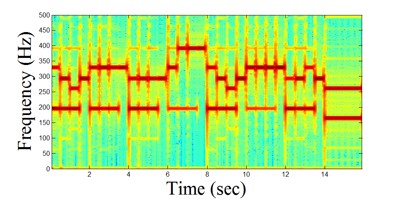

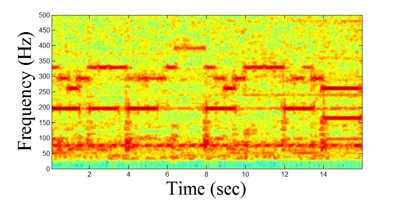

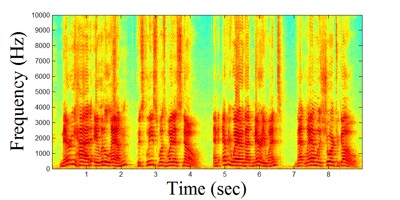

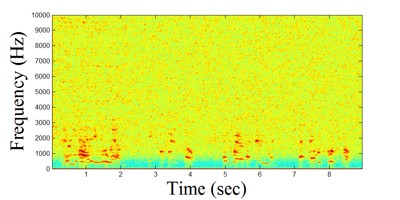

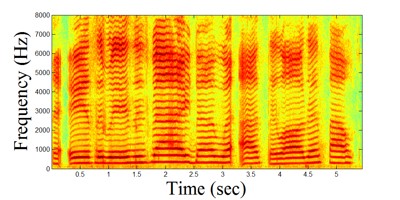

By taking space-time slices from the motion-magnified videos of all six notes (the top four of which are shown in the video above), we can produce a "visual spectrogram", a representation of the sound based on the visual signal.

Matlab code - See ScriptTestVideoToSound.m for details.

** Note: This work is patent-pending, owned by MIT.

FAQs

Thank you for your interest in our work. Here are some answers to questions we have been getting frequently:

Can you recover sound from old silent movies?

In general, the video frame rate has to be at least twice the highest sound (motion) frequency you'd like to recover. While we haven't studied old movies carefully, their low frame rates (12-26 fps according to Wikipedia) and low image quality lead us to believe they do not contain enough visual information for our technique to recover sound.
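To make the frame-rate argument concrete, here is a minimal sketch based on the Nyquist criterion: a signal sampled at a given frame rate can only represent frequencies up to half that rate. The function name and the 20 Hz lower bound of human hearing are assumptions added for illustration. The frame rates are the ones quoted on this page.

```python
def nyquist_limit_hz(frame_rate_fps: float) -> float:
    """Highest frequency (Hz) representable without aliasing at this frame rate."""
    return frame_rate_fps / 2.0


# Assumption: roughly 20 Hz is the commonly cited lower edge of human hearing.
LOWEST_AUDIBLE_HZ = 20.0

# 12 and 26 fps: the old-movie range quoted above.
# 2200 and 20000 fps: high-speed videos listed in the results on this page.
for fps in (12, 26, 2200, 20000):
    limit = nyquist_limit_hz(fps)
    reaches_audible = limit > LOWEST_AUDIBLE_HZ
    print(f"{fps:>6} fps -> up to {limit:g} Hz (reaches audible band: {reaches_audible})")
```

Under these assumptions, even the fastest old-movie frame rate tops out at 13 Hz, below the audible range, while the high-speed captures cover a large part of it.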

That said, we did show in our paper that it is possible to recover sound frequencies higher than the frame rate of a video if the video was taken with a modern camera (for example, a recent video that is missing its audio). This can be done by exploiting the “rolling shutter”, a mechanism that modern image sensors commonly use to capture images. There is a short explanation of this in our video, and a more detailed explanation in our paper (Section 6). In that case, some audible sounds could potentially be recovered from silent videos under some conditions (see the answer to "How far away can the camera be to recover sound?" below).
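The following sketch illustrates why a rolling shutter can raise the effective sampling rate. It uses a simplified model, not the exact formulation in the paper: sensor rows are assumed to be read one after another with a constant delay, so each row acts as its own time sample. The function name and all parameter values (60 fps, 720 rows, 16 microseconds per row) are hypothetical.

```python
def rolling_shutter_sample_times(num_frames, rows, fps, line_delay_s):
    """Capture time (seconds) of every row in every frame, in readout order.

    Simplified model: row y of frame n is captured at n / fps + y * line_delay_s.
    """
    frame_period = 1.0 / fps
    if rows * line_delay_s > frame_period:
        raise ValueError("reading out all rows must fit within one frame period")
    return [
        n * frame_period + y * line_delay_s
        for n in range(num_frames)
        for y in range(rows)
    ]


# Hypothetical camera parameters, chosen only for illustration.
fps = 60.0
rows = 720
line_delay_s = 16e-6

times = rolling_shutter_sample_times(2, rows, fps, line_delay_s)

# Within a frame, consecutive rows are one line delay apart, so the effective
# sampling rate is 1 / line_delay_s rather than the frame rate.
effective_rate_hz = 1.0 / line_delay_s

# Between the last row of one frame and the first row of the next, there is a
# gap during which no samples are taken.
gap_s = times[rows] - times[rows - 1]

print(f"samples: {len(times)}, effective rate: {effective_rate_hz:.0f} Hz")
print(f"gap between frames: {gap_s * 1e3:.2f} ms")
```

In this model the row samples arrive far faster than the frame rate, but with a gap at the end of every frame, so the recovered signal has periodic missing segments that a real method would have to handle.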

Hasn't this been done before with lasers?

Indeed (see e.g. the Laser Microphone), but what we showed is that it is often possible to recover sound from surface vibration without the use of a laser. This is the reason we call this a passive technique to recover remote sound -- we do not need to actively shine laser light on the object, but rather just observe the light that is already in the scene (assuming the scene has enough light). There is more discussion on the difference between our technique and laser vibrometry in our paper.

How far away can the camera be to recover sound?

This question is hard to answer with a single number, because our ability to recover sound with a camera depends on several factors, such as the material of the object used to recover the sound, the texture on the object's surface, the volume of the sound, the lighting in the scene, and the optical magnification. In Section 5 of our paper, we give a recipe that takes those parameters and predicts the quality (in terms of SNR) of the recovered sound, based on several objects and sounds we have tested.
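For readers unfamiliar with SNR as a quality measure, here is a minimal sketch of a plain signal-to-noise ratio in decibels between a reference sound and a recovered one. It assumes the two signals are already time-aligned and on the same scale. The function name and sample values are made up for illustration, and the exact metric used in the paper may differ from this basic definition.

```python
import math


def snr_db(reference, recovered):
    """Signal-to-noise ratio in dB, treating (reference - recovered) as noise.

    Assumes both sequences are time-aligned, equally scaled, and equally long.
    """
    if len(reference) != len(recovered):
        raise ValueError("signals must have the same number of samples")
    signal_power = sum(x * x for x in reference)
    noise_power = sum((x - y) ** 2 for x, y in zip(reference, recovered))
    if noise_power == 0:
        return math.inf  # perfect recovery
    return 10.0 * math.log10(signal_power / noise_power)


# Made-up samples: the recovered signal is the reference attenuated by 10%.
reference = [1.0, -1.0, 1.0, -1.0]
recovered = [0.9, -0.9, 0.9, -0.9]
print(f"SNR: {snr_db(reference, recovered):.1f} dB")
```

A higher value means the recovered sound is closer to the source. Each additional 10 dB corresponds to a tenfold reduction in error power relative to the signal.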

Where can I find the code?

The code and data are now available for download from this webpage. See ScriptTestVideoToSound.m in the Matlab code for details.

Related Publications

We have been working for a couple of years now on techniques to analyze subtle color and motion signals in videos. Check out our previous work:

Riesz Pyramids for Fast Phase-Based Video Magnification, ICCP 2014

Analysis and Visualization of Temporal Variations in Video, Michael Rubinstein, PhD Thesis, MIT Feb 2014

Phase-Based Video Motion Processing, SIGGRAPH 2013

Eulerian Video Magnification for Revealing Subtle Changes in the World, SIGGRAPH 2012

Acknowledgements

We thank Justin Chen for his helpful feedback, Dr. Michael Feng and Draper Laboratory for lending us their Laser Doppler Vibrometer, and the SIGGRAPH reviewers for their comments. We acknowledge funding support from QCRI and NSF CGV-1111415. Abe Davis and Neal Wadhwa were supported by the NSF Graduate Research Fellowship Program under Grant No. 1122374. Abe Davis was also supported by QCRI, and Neal Wadhwa was also supported by the MIT Department of Mathematics. Part of this work was done when Michael Rubinstein was a student at MIT, supported by the Microsoft Research PhD Fellowship.

Last updated: Apr 2016