Differentiable Rendering of Neural SDFs through Reparameterization

ACM SIGGRAPH Asia 2022 (Conf.)

MIT CSAIL

Adobe Research

UC San Diego

Adobe Research

MIT CSAIL

Abstract

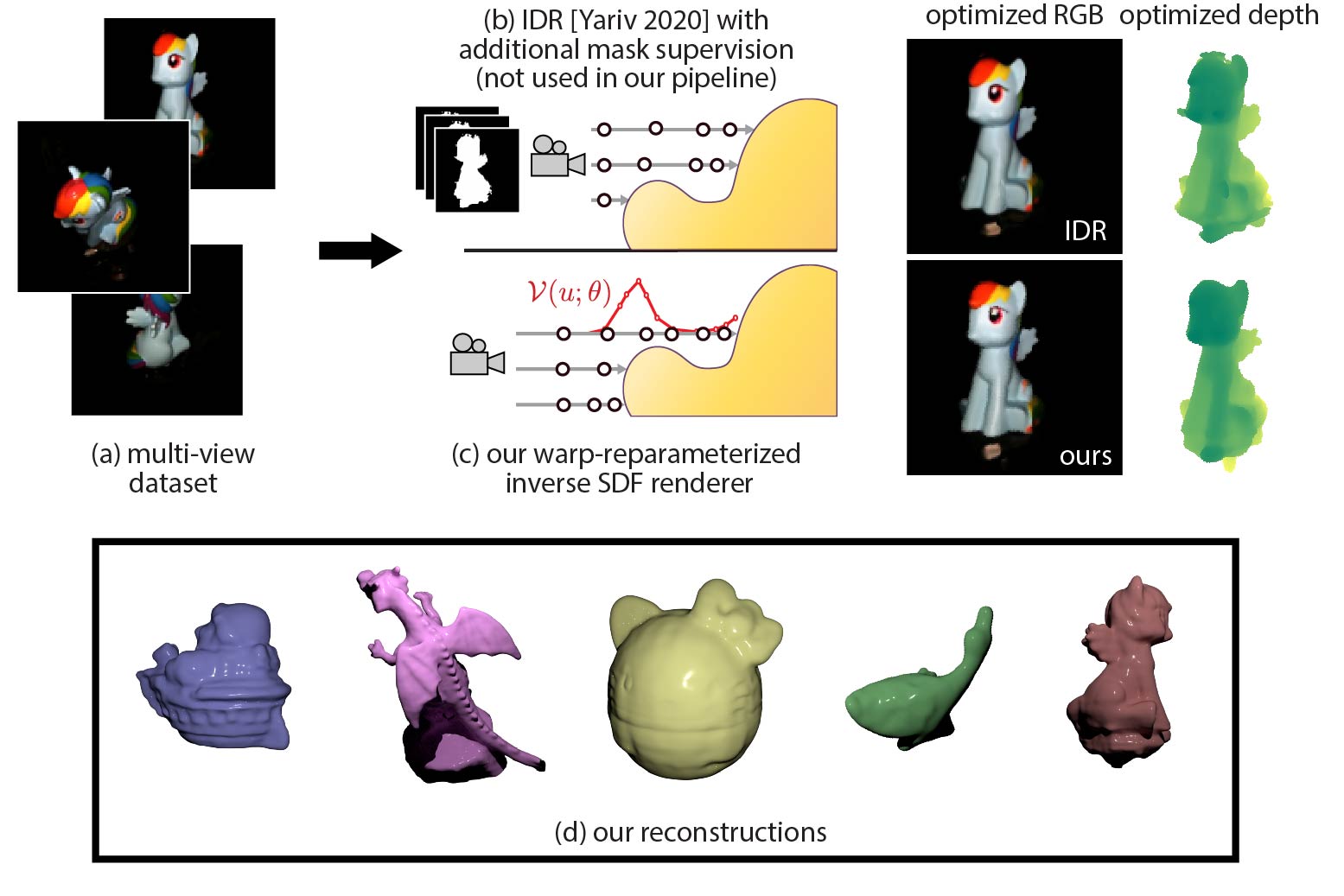

We present a method to automatically compute correct gradients with respect to geometric scene parameters in neural SDF renderers. Recent physically-based differentiable rendering techniques for meshes have used edge-sampling to handle discontinuities, particularly at object silhouettes, but SDFs do not have a simple parametric form amenable to sampling. Instead, our approach builds on area-sampling techniques and develops a continuous warping function for SDFs to account for these discontinuities. Our method leverages the distance to the surface encoded in an SDF and uses quadrature on sphere tracer points to compute this warping function. We further show that this can be done by subsampling the points, which makes the method tractable for neural SDFs. Our differentiable renderer can be used to optimize neural shapes from multi-view images and produces 3D reconstructions comparable to those of recent SDF-based inverse rendering methods, without requiring 2D segmentation masks to guide the geometry optimization or volumetric approximations to the geometry.
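For readers unfamiliar with sphere tracing, the following is a minimal sketch of the generic algorithm that yields the "sphere tracer points" mentioned above. It is not the method of this paper: it uses an analytic sphere in place of a neural SDF, and the names `sphere_sdf` and `sphere_trace`, the scene, and all parameter values are assumptions chosen for illustration. It only shows how a ray is marched through an SDF and how the per-point distances to the surface become available along the way.

```python
import math

def sphere_sdf(p, center=(0.0, 0.0, 3.0), radius=1.0):
    """Signed distance from point p to a sphere (hypothetical test scene)."""
    return math.dist(p, center) - radius

def sphere_trace(origin, direction, sdf, max_steps=64, eps=1e-4, t_max=20.0):
    """March along a ray, stepping by the SDF value at each visited point.

    Returns (t_hit or None, samples), where samples is a list of
    (ray parameter t, SDF value) pairs for every point visited.
    """
    norm = math.sqrt(sum(d * d for d in direction))
    direction = tuple(d / norm for d in direction)
    t = 0.0
    samples = []
    for _ in range(max_steps):
        p = tuple(o + t * d for o, d in zip(origin, direction))
        dist = sdf(p)
        samples.append((t, dist))
        if dist < eps:       # close enough to the surface: report a hit
            return t, samples
        t += dist            # safe step: no surface lies within `dist` of p
        if t > t_max:        # ray left the region of interest
            break
    return None, samples

# A ray that hits the sphere head-on, and one that misses it entirely.
t_hit, samples = sphere_trace((0.0, 0.0, 0.0), (0.0, 0.0, 1.0), sphere_sdf)
t_miss, miss_samples = sphere_trace((0.0, 0.0, 0.0), (0.0, 1.0, 0.0), sphere_sdf)
```

Even for the ray that misses, `miss_samples` records how close each visited point came to the surface. Per-point distances of this kind are what an SDF makes available along a ray; how the paper combines them into a warping function is not reproduced here.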

Acknowledgements

This work was partially completed during an internship at Adobe Research and subsequently funded by the Toyota Research Institute and the National Science Foundation (NSF 2105806). We acknowledge the MIT SuperCloud and the Lincoln Laboratory Supercomputing Center for providing HPC resources. We also thank Shuang Zhao for early discussions and Yash Belhe for proofreading.