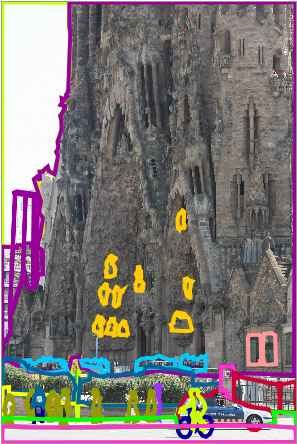

Train in Spain and test in the rest

of the world

Try to recognize and segment as many object categories as you can. Training

images correspond to outdoor pictures taken in different cities of Spain.

Characteristics of the dataset:

| | Images | Objects | Cars | Person |

Building | Road | Sidewalk | Sky | Tree |

| Training set | 2920 | 32164 | 4441 | 2524 |

3004 | 1321 | 1272 | 1009 | 2652 |

| Test set | 1133 | 32853 | 2265 | 2119 |

2117 | 739 | 1107 | 823 | 1652 |

Training set: contains more than 1000 fully annotated images and around

2000 partially annotated images. Including partially annotated images

allows algorithms to show if they are able to benefit from additional

partially labeled images. As we try to build large datasets, it will be

common to have many images that are only partially annotated, therefore,

developing algorithms and training strategies that can cope with this

issue will allow using large datasets without having to make the labor

intensive effort of careful image annotation.

Test set: it only contains images that are fully labeled. The test set

corresponds to images taken from the rest of the world which guarantees

that images will be quite different between training and test.

Challenges:

Many object classes have very few training samples. The distribution

of counts is very heavy tailed. There is a dozen of object classes with

thousands of training samples, and there are hundreds of object classes

with just a handful of training samples.

Dealing with partially labeled training images.

There is a large range of quality of the annotations. From each polygon

you can extract a very good bounding box. But for many objects you can

also get a quite accurate segmentation.

Release October 22, 2008:

training.tar.gz

(5.8 Gbytes) | thumbnails

| list of training categories

test.tar.gz

(1.8 Gbytes) | thumbnails

| list of test categories

Use the LabelMe toolbox to read the annotations and to extract segmentation

masks.

Send us your comments.

Citation: LabelMe:

a database and web-based tool for image annotation. B. Russell, A.

Torralba, K. Murphy, W. T. Freeman. International Journal of Computer

Vision, 2007. |