Differentiable Programming for Image Processing and Deep Learning in Halide

| Tzu-Mao Li | Michaël Gharbi | Andrew Adams | Frédo Durand | Jonathan Ragan-Kelley |
| --- | --- | --- | --- | --- |
| MIT CSAIL | MIT CSAIL | Facebook AI Research | MIT CSAIL | UC Berkeley |

Code merged to main Halide repo!

Abstract

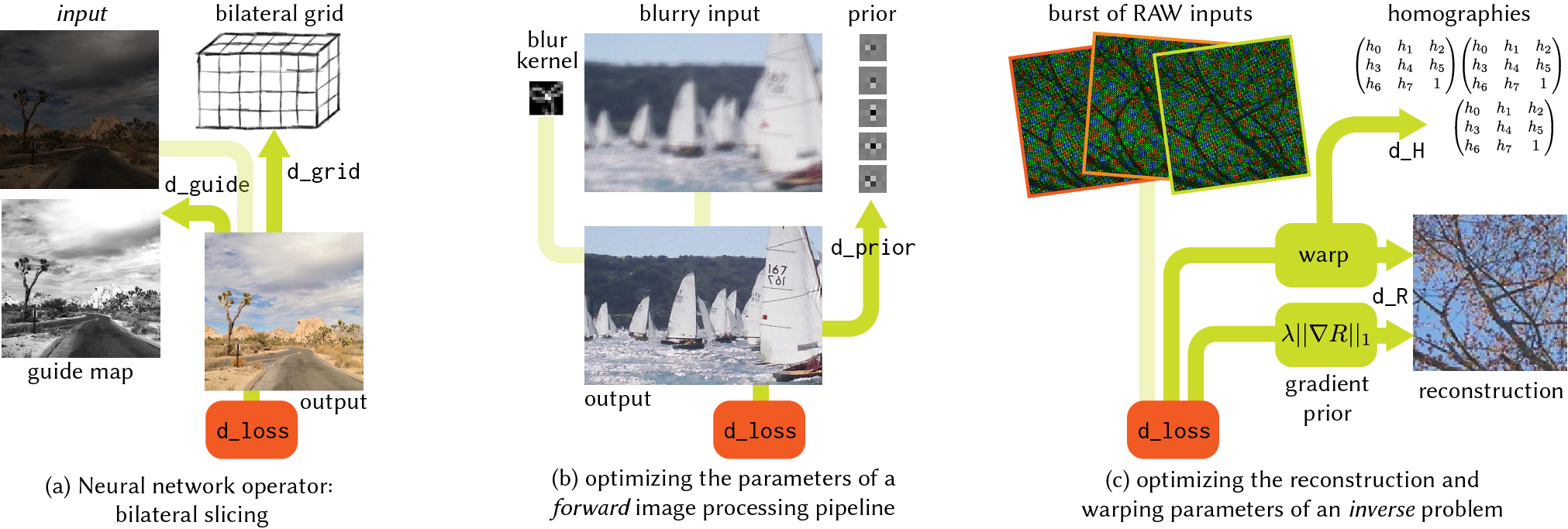

Gradient-based optimization has enabled dramatic advances in computational imaging through techniques like deep learning and nonlinear optimization. These methods require gradients not just of simple mathematical functions, but of general programs which encode complex transformations of images and graphical data. Unfortunately, practitioners have traditionally been limited to either hand-deriving gradients of complex computations, or composing programs from a limited set of coarse-grained operators in deep learning frameworks. At the same time, writing programs with the level of performance needed for imaging and deep learning is prohibitively difficult for most programmers.

We extend the image processing language Halide with general reverse-mode automatic differentiation (AD), and the ability to automatically optimize the implementation of gradient computations. This enables automatic computation of the gradients of arbitrary Halide programs, at high performance, with little programmer effort. A key challenge is to structure the gradient code to retain parallelism. We define a simple algorithm to automatically schedule these pipelines, and show how Halide’s existing scheduling primitives can express and extend the key AD optimization of "checkpointing."
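To make the parallelism concern concrete, here is a minimal sketch in plain Python. It is not Halide code and does not use Halide's differentiation API. The two-tap `blur` stencil, the function names, and the sample values are all assumptions chosen for illustration. The sketch writes the reverse-mode gradient of the stencil in two ways: a scatter form, where each output accumulates into shared input slots, and a gather form, where each input gradient is computed independently of the others.

```python
# Illustrative only: plain Python, not Halide and not its autodiff API.
# The stencil and all names below are hypothetical examples.

def blur(inp):
    """Forward pass: out[x] = 0.5 * (inp[x] + inp[x + 1])."""
    return [0.5 * (inp[x] + inp[x + 1]) for x in range(len(inp) - 1)]


def blur_grad_scatter(d_out, n):
    """Reverse pass, scatter form: each output adds into two input slots.

    Neighbouring iterations write to the same slot, so running this loop
    in parallel would need atomic updates or a reduction.
    """
    d_inp = [0.0] * n
    for x, g in enumerate(d_out):
        d_inp[x] += 0.5 * g
        d_inp[x + 1] += 0.5 * g
    return d_inp


def blur_grad_gather(d_out, n):
    """Reverse pass, gather form: each input slot reads the outputs that used it.

    Every element is computed independently, so the loop is trivially parallel.
    """
    def adjoint(x):
        left = d_out[x - 1] if x >= 1 else 0.0   # out[x - 1] read inp[x]
        right = d_out[x] if x < n - 1 else 0.0   # out[x] read inp[x]
        return 0.5 * (left + right)

    return [adjoint(x) for x in range(n)]


inp = [1.0, 2.0, 4.0, 8.0]
d_out = [1.0, 1.0, 1.0]  # gradient of sum(blur(inp)) with respect to each output
grad_scatter = blur_grad_scatter(d_out, len(inp))
grad_gather = blur_grad_gather(d_out, len(inp))
```

Both functions return the same gradient. The difference is only in how the work is organized, which is the kind of structural choice that determines whether gradient code can run in parallel efficiently.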

Using this new tool, we show how to easily define new neural network layers which automatically compile to high-performance GPU implementations, and how to solve nonlinear inverse problems from computational imaging. Finally, we show how it enables dramatically improving the quality of even traditional, feed-forward image processing algorithms, blurring the distinction between classical and deep methods.

Publication

Tzu-Mao Li, Michaël Gharbi, Andrew Adams, Frédo Durand, Jonathan Ragan-Kelley.

Differentiable Programming for Image Processing and Deep Learning in Halide.

ACM Transactions on Graphics 37(4) (Proceedings of ACM SIGGRAPH 2018)

BibTeX

Downloads

Code

https://github.com/halide/Halide

Acknowledgement

This work is partially funded by Toyota, the Intel Science and Technology Center for Agile Design, ADEPT Lab industrial sponsors Google, Siemens, and SK Hynix, and the NSF/Intel Partnership on Computer Assisted Programming for Heterogeneous Architectures through grant CCF-1723445.