I am excited to develop artificial 3D perception systems at Facebook Reality Labs. We are building perception and AI systems to create LiveMaps, a shared virtual map of spaces, to support the next generation of AR.

In spring 2017, I defended my PhD thesis on Nonparametric Directional Perception. My advisers at MIT's Computer Science and Artificial Intelligence Laboratory (CSAIL) were John W. Fisher III and John Leonard. On my way to MIT, I graduated from the Technische Universität München (TUM) with a Diplom and from the Georgia Institute of Technology with an M.Sc.

My research interests in AI and robotics are in 3D perception [1, 3, 4, 8, 9, 10, 11, 12, 13], modeling (directional data [1, 2, 11, 13] and Bayesian nonparametrics [5, 7, 11, 13]), and inference (sampling [1, 2, 4, 13], optimization [6, 7], low-variance asymptotics [3], and global search [11]).

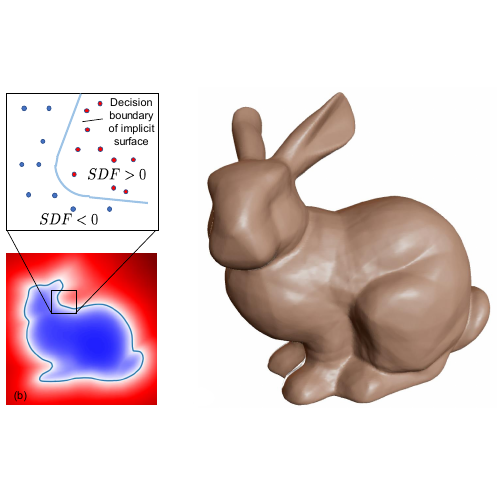

DeepSDF

Latent representation for continuous signed distance functions to represent 3D shapes.

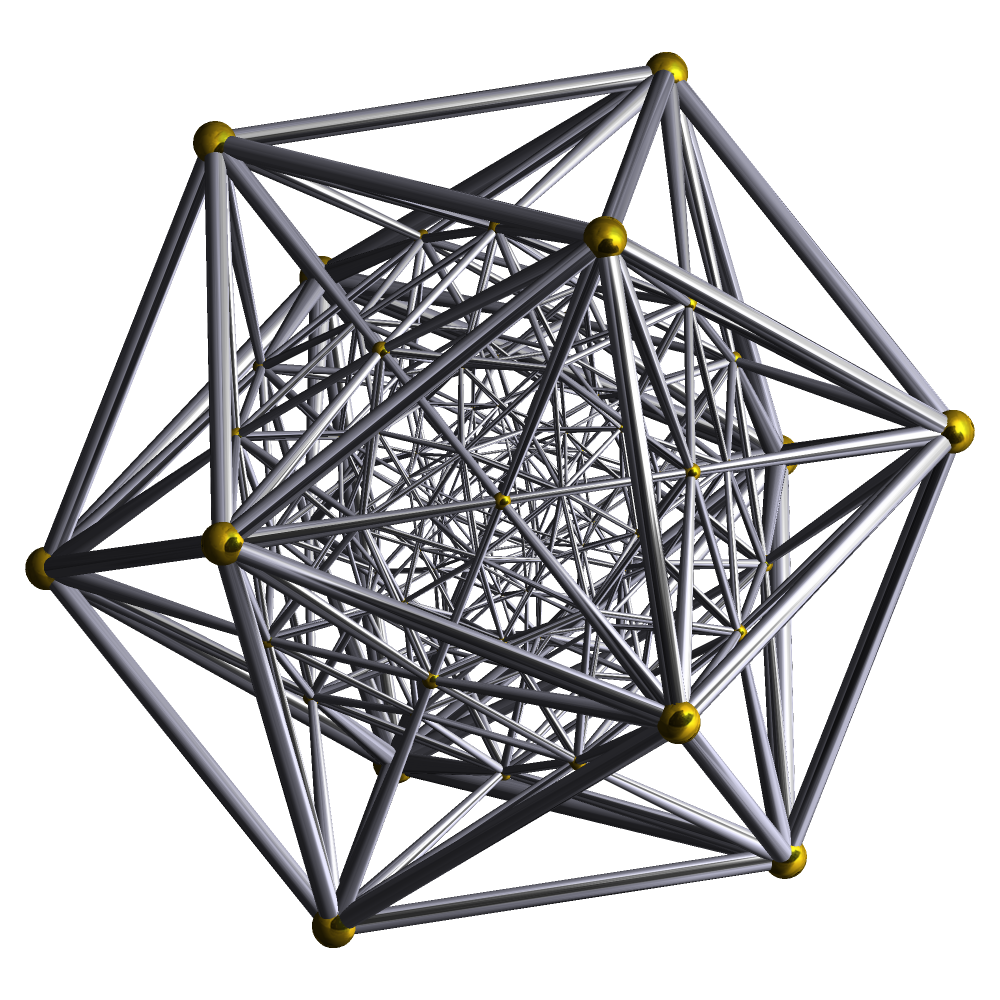

PhD Thesis

Nonparametric Directional Perception captures and uses regularities of man-made environments revealed in their surface normal distribution.

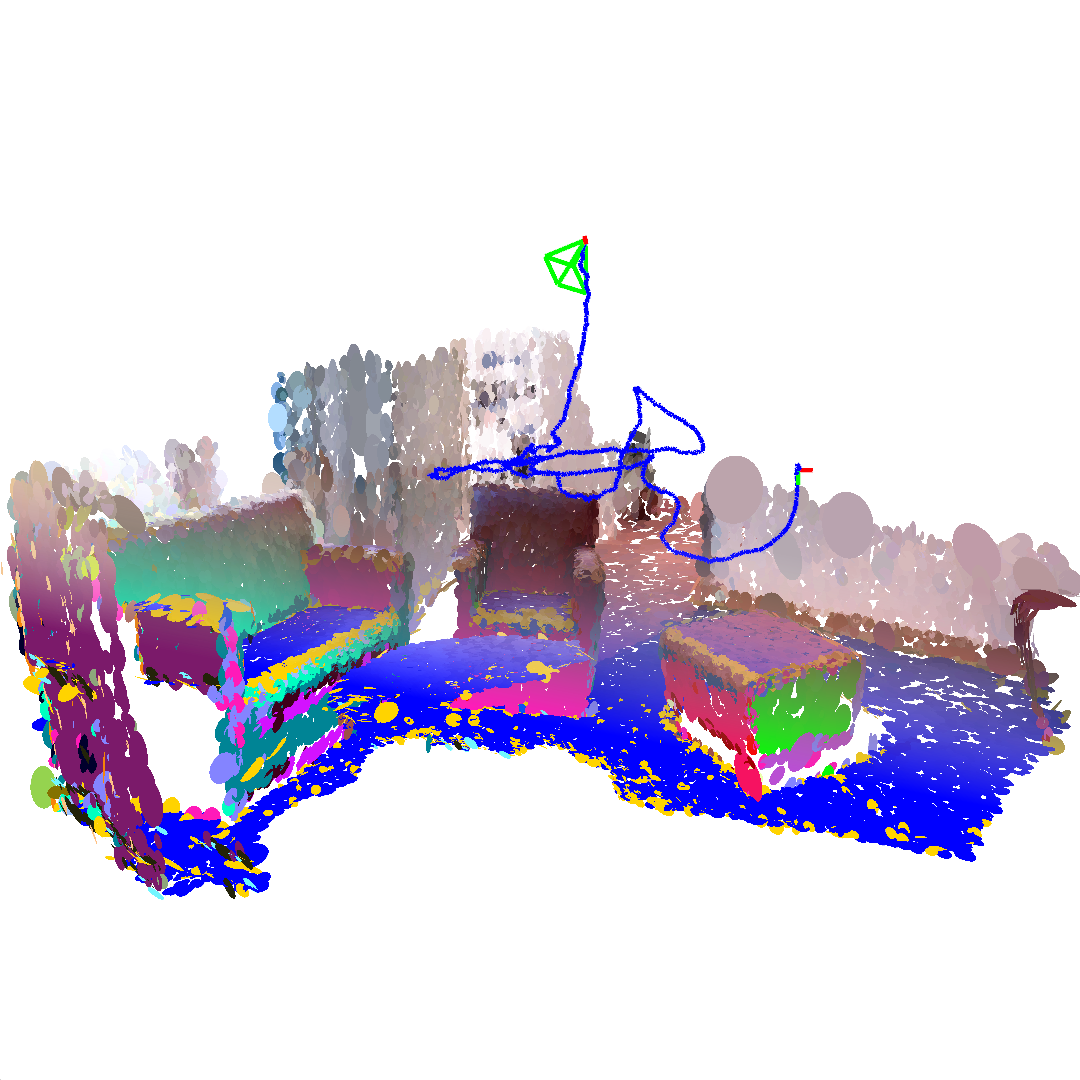

Alignment

Efficient global point cloud alignment via nonparametric mixtures and branch-and-bound search over rotation and translation space.

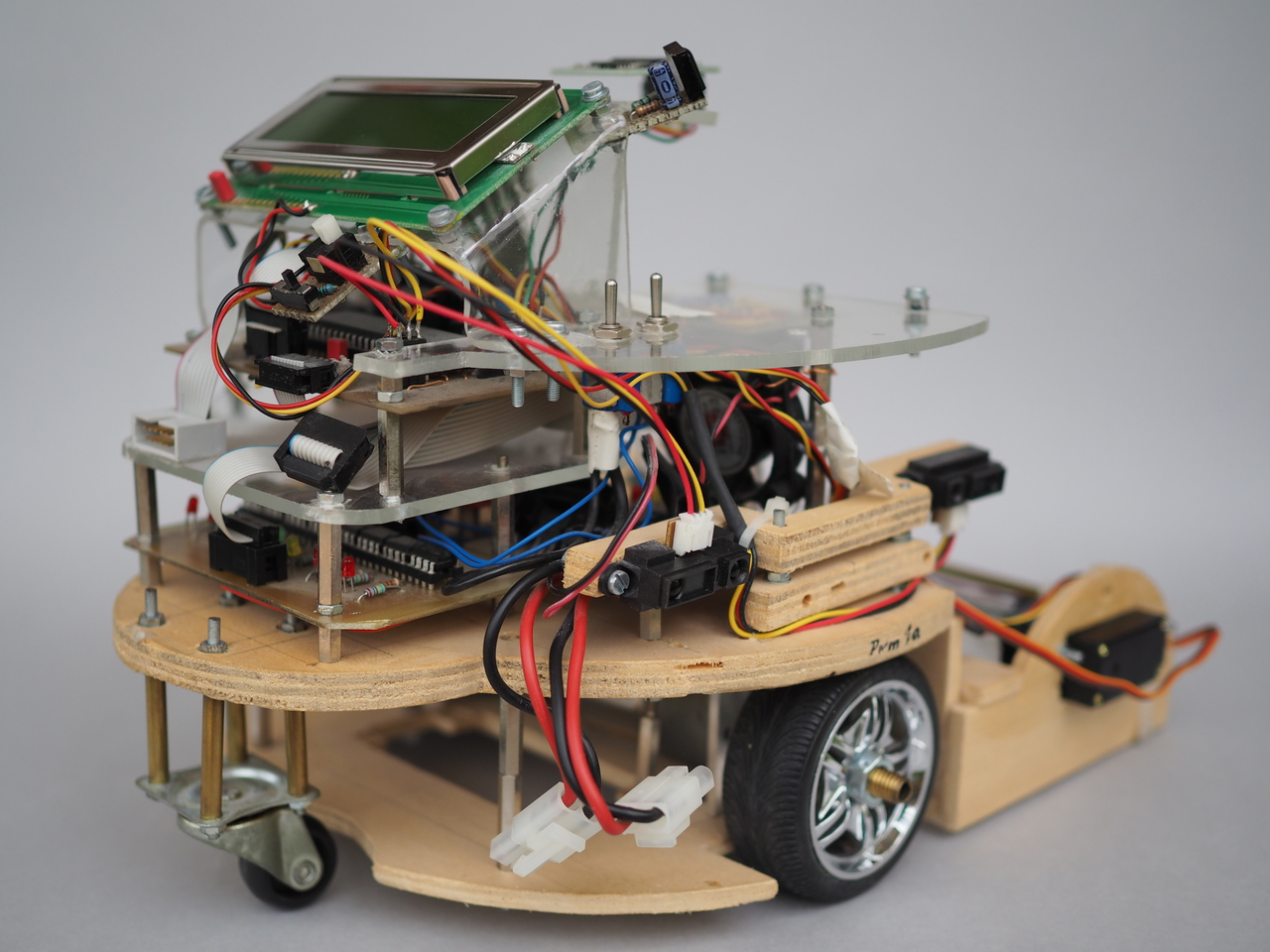

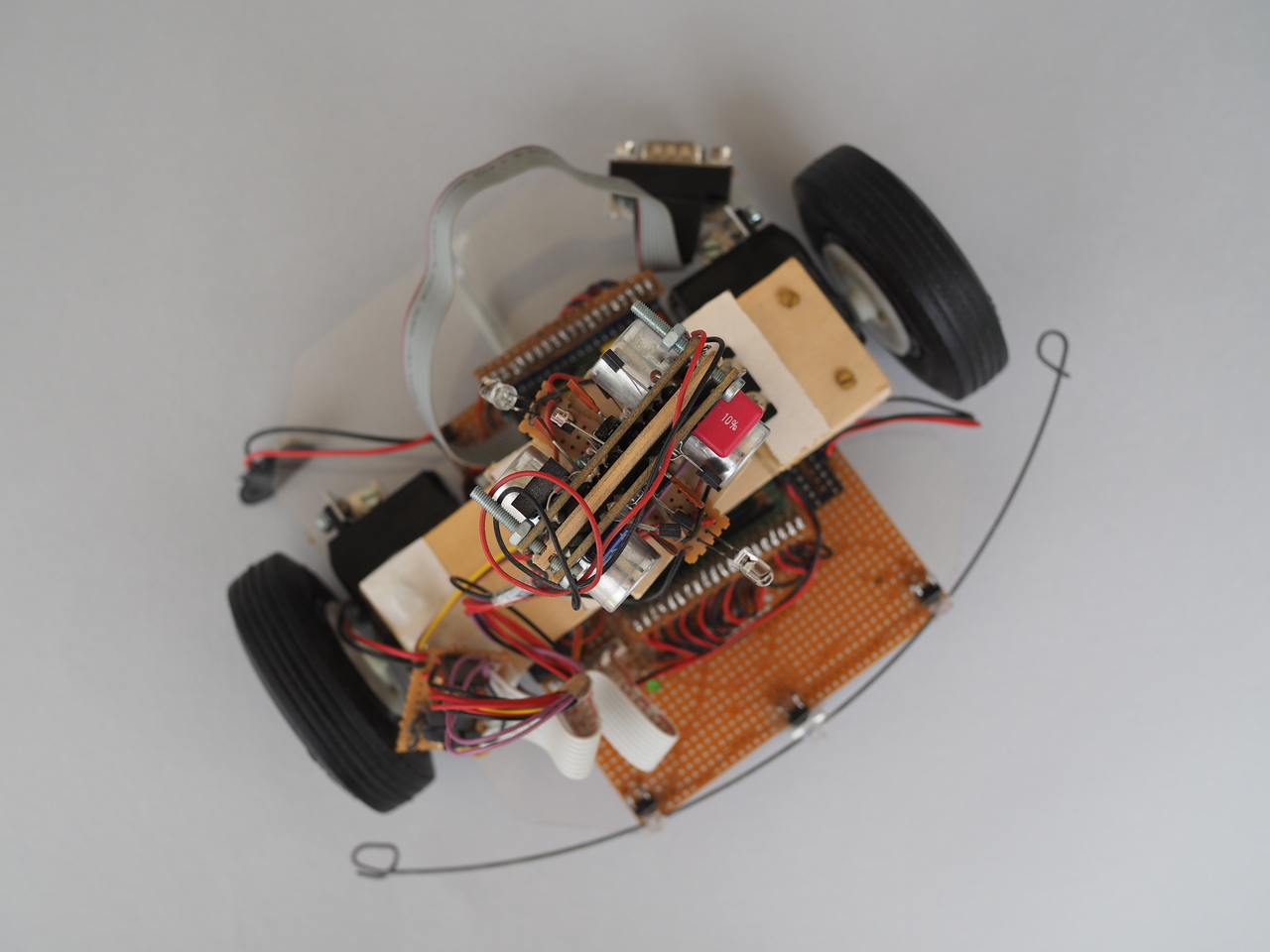

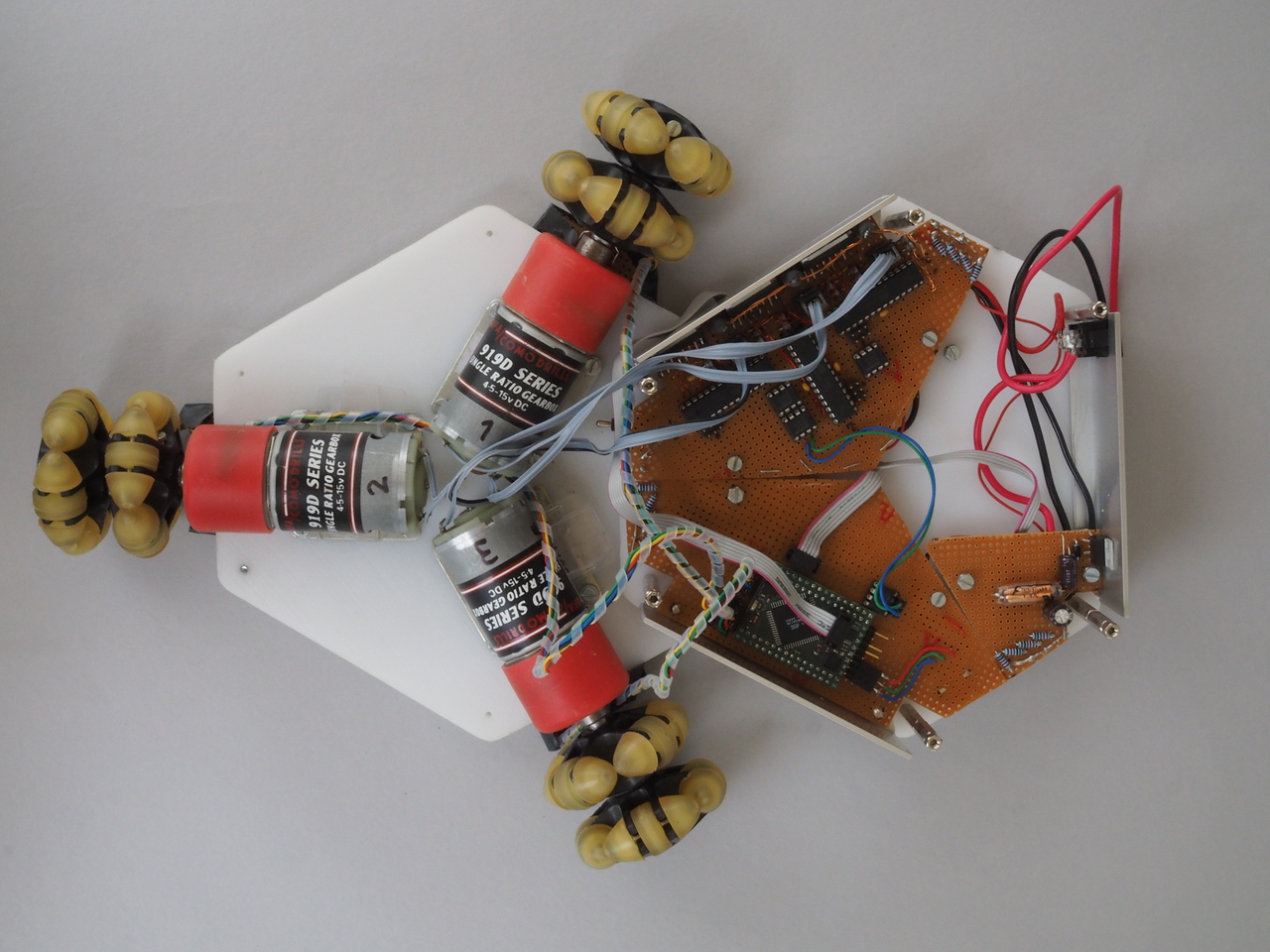

My interest in robotics and perceiving systems dates back to age 15, when my father gave me my first microprocessor as a present. Since then, I have built seven robots (Plexa, Plicro, Roboking2005, Ca3505, Kno0Bot, Kno2Bot, Holomove) from scratch and worked in multiple teams on robotics-related projects (KUKAyouBot, rEIzor).